Monday, April 6. 2020

Userdir URLs like https://example.org/~username/ are dangerous

I would like to point out a security problem with a classic variant of web space hosting. While this issue should be obvious to anyone who knows basic web security, I have never seen it discussed publicly.

Some server operators allow every user on the system to have a personal web space: users place files in a directory (often ~/public_html) and these appear on the host under a URL with a tilde and their username (e.g. https://example.org/~username/). The Apache web server provides such a function in the mod_userdir module. While this concept is rather old, it is still in use, often by universities and Linux distributions.

From a web security perspective there is a very obvious problem with such setups that stems from the same origin policy, which is a core principle of JavaScript security. While there are many subtleties about it, the key principle is that a piece of JavaScript running on one web host is isolated from other web hosts.

To put this into a practical example: If you read your emails on a web interface on example.com then a script running on example.org should not be able to read your mails, change your password or mess in any other way with the application running on a different host. However if an attacker can place a script on example.com, which is called a Cross Site Scripting or XSS vulnerability, the attacker may be able to do all that.

The problem with userdir URLs should now become obvious: All userdir URLs on one server run on the same host and thus are in the same origin. Such a setup has XSS by design.
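To make the comparison concrete, here is a minimal Python sketch. It is a simplification of what browsers do: it treats an origin as the (scheme, host, port) triple and ignores the subtleties mentioned above. The helper names `origin` and `same_origin` are made up for this illustration.

```python
from urllib.parse import urlsplit

# Default ports, so that https://example.org and https://example.org:443 compare equal.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url):
    """Return a simplified origin triple (scheme, host, port) for a URL."""
    parts = urlsplit(url)
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    return (parts.scheme, parts.hostname, port)


def same_origin(url_a, url_b):
    """True if both URLs share scheme, host and port. The path plays no role."""
    return origin(url_a) == origin(url_b)


# Two userdir pages differ only in the path, so they share one origin.
print(same_origin("https://example.org/~bob/", "https://example.org/~mallory/"))  # True

# Different hostnames give different origins.
print(same_origin("https://bob.example.org/", "https://mallory.example.org/"))  # False
```

The path, and therefore the `~username` part, never enters the comparison, which is the whole problem.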

What does that mean in practice? Let's assume Bob, who has the username "bob" on example.org, runs a blog on https://example.org/~bob/. User Mallory, who has the username "mallory" on the same host, wants to attack Bob. If Bob is currently logged into his blog and Mallory manages to convince Bob to open her webpage, hosted at https://example.org/~mallory/, at the same time, she can place an attack script there that will attack Bob. The attack could be a variety of things, from adding another user to the blog to changing Bob's password or reading unpublished content.

This is only an issue if the users on example.org do not trust each other, so the operator of the host may decide this is no problem if there is only a small number of trusted users. However there is another issue: An XSS vulnerability on any of the userdir web pages on the same host may be used to attack any other web page on the same host.

So if for example Alice runs an outdated web application with a known XSS vulnerability on https://example.org/~alice/ and Bob runs his blog on https://example.org/~bob/ then Mallory can use the vulnerability in Alice's web application to attack Bob.

All of this is primarily an issue if people run non-trivial web applications that have accounts and logins. If the web pages are only used to host static content the issues become much less problematic, though it is still possible, with some limitations, for one user to show the webpage of another user in a manipulated way.

So what does that mean? You probably should not use userdir URLs for anything except hosting of simple, static content - and probably not even there if you can avoid it. Even in situations where all users are considered trusted there is an increased risk, as vulnerabilities can cross application boundaries. As for Apache's mod_userdir I have contacted the Apache developers and they agreed to add a warning to the documentation.

If you want to provide something similar to your users you might want to give every user a subdomain, for example https://alice.example.org/, https://bob.example.org/ etc. There is however still a caveat with this: Unfortunately the same origin policy does not apply to all web technologies and particularly it does not apply to Cookies. However cross-hostname Cookie attacks are much less straightforward and there is often no practical attack scenario, thus using subdomains is still the more secure choice.

To avoid these Cookie issues for domains where user content is hosted regularly – a well-known example is github.io – there is the Public Suffix List for such domains. If you run a service with user subdomains you might want to consider adding your domain there, which can be done with a pull request.

Thursday, June 15. 2017

Don't leave Coredumps on Web Servers

Coredumps are a feature of Linux and other Unix systems to analyze crashing software. If a program crashes, for example due to an invalid memory access, the operating system can save the current content of the application's memory to a file. By default it is simply called core.

While this is useful for debugging purposes it can produce a security risk. If a web application crashes the coredump may simply end up in the web server's root folder. Given that its file name is known an attacker can simply download it via a URL of the form https://example.org/core. As coredumps contain an application's memory they may expose secret information. A very typical example would be passwords.

PHP used to crash relatively often. Recently a lot of these crash bugs have been fixed, in part because PHP now has a bug bounty program. But there are still situations in which PHP crashes. Some of them likely won't be fixed.

How to disclose?

With a scan of the Alexa Top 1 Million domains for exposed core dumps I found around 1,000 vulnerable hosts. I was faced with a challenge: How can I properly disclose this? It is obvious that I wouldn't write hundreds of manual mails. So I needed an automated way to contact the site owners.

Abusix runs a service where you can query the abuse contacts of IP addresses via a DNS query. This turned out to be very useful for this purpose. One could also imagine contacting domain owners directly, but that's not very practical. The domain whois databases have rate limits and don't always expose contact mail addresses in a machine readable way.
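As a rough illustration of what such a lookup involves, the following Python sketch only builds the DNS name one would query. It assumes the service follows the common DNS-list convention of prepending the reversed IPv4 octets to a zone name. The `zone` argument is deliberately left as a parameter, and the value in the usage line is a placeholder, not the real zone, which has to be taken from the Abusix documentation. The function name is made up for this example.

```python
import ipaddress


def abuse_query_name(ip, zone):
    """Build the DNS name for an abuse-contact lookup of an IPv4 address.

    Assumption: the lookup zone expects reversed octets, as DNS-based
    lists commonly do. `zone` must be supplied by the caller.
    """
    # IPv4Address raises a ValueError subclass for malformed input.
    addr = ipaddress.IPv4Address(ip)
    reversed_octets = ".".join(reversed(str(addr).split(".")))
    return f"{reversed_octets}.{zone}"


# "abuse-zone.example" is a placeholder zone for illustration only.
print(abuse_query_name("192.0.2.10", "abuse-zone.example"))
# prints 10.2.0.192.abuse-zone.example
```

The resulting name would then be queried for a TXT record with whatever DNS tooling is at hand, once per affected IP, which is what makes the approach scale to about a thousand hosts.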

Using the abuse contacts doesn't reach all of the affected host operators. Some abuse contacts were nonexistent mail addresses, others didn't have abuse contacts at all. I also got all kinds of automated replies, some of them asking me to fill out forms or do other things, otherwise my message wouldn't be read. Due to the scale I ignored those. I feel that if people make it hard for me to inform them about security problems that's not my responsibility.

I took away two things that I changed in a second batch of disclosures. Some abuse contacts seem to automatically search for IP addresses in the abuse mails. I originally only included affected URLs. So I changed that to include the affected IPs as well.

In many cases I was informed that the affected hosts are not owned by the company I contacted, but by a customer. Some of them asked me if they're allowed to forward the message to them. I thought that would be obvious, but I made it explicit now. Some of them asked me to contact their customers, which again, of course, is impractical at scale. And sorry: They are your customers, not mine.

How to fix and prevent it?

If you have a coredump on your web host, the obvious fix is to remove it from there. However you obviously also want to prevent this from happening again.

There are two settings that impact coredump creation: a limits setting, configurable via /etc/security/limits.conf and ulimit, and a sysctl interface that can be found under /proc/sys/kernel/core_pattern.

The limits setting is a size limit for coredumps. If it is set to zero then no core dumps are created. To set this as the default you can add something like this to your limits.conf:

    * soft core 0

The sysctl interface sets a pattern for the file name and can also contain a path. You can set it to something like this:

    /var/log/core/core.%e.%p.%h.%t

This would store all coredumps under /var/log/core/ and add the executable name, process id, host name and timestamp to the filename. The directory needs to be writable by all users, so you should use a directory with the sticky bit (chmod +t).

If you set this via the proc file interface it will only be temporary until the next reboot. To set this permanently you can add it to /etc/sysctl.conf:

    kernel.core_pattern = /var/log/core/core.%e.%p.%h.%t

Some Linux distributions directly forward core dumps to crash analysis tools. This can be done by prefixing the pattern with a pipe (|). These tools like apport from Ubuntu or abrt from Fedora have also been the source of security vulnerabilities in the past. However that's a separate issue.
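To see how the two settings play out, here is a small Python sketch. The first function reads the core size limit of the current process through the standard `resource` module (Unix only). The second mimics how the four specifiers used above would be filled in. It is an illustration with made-up function names and only covers %e, %p, %h, %t and %%, not the kernel's full pattern handling.

```python
import re
import resource


def core_dumps_disabled():
    """True if the soft core size limit of this process is zero."""
    soft, _hard = resource.getrlimit(resource.RLIMIT_CORE)
    return soft == 0


def expand_core_pattern(pattern, exe, pid, host, timestamp):
    """Fill in %e, %p, %h, %t and %% the way the pattern above is described.

    Unknown specifiers are left untouched in this sketch.
    """
    values = {"e": exe, "p": str(pid), "h": host, "t": str(timestamp), "%": "%"}
    return re.sub(r"%(.)", lambda m: values.get(m.group(1), m.group(0)), pattern)


print(expand_core_pattern("/var/log/core/core.%e.%p.%h.%t",
                          "php-fpm", 1234, "web1", 1497520800))
# prints /var/log/core/core.php-fpm.1234.web1.1497520800
```

Note that `core_dumps_disabled()` only reflects the limit of the process that calls it. A web server started by an init system may run with different limits.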

Look out for coredumps

My scans showed that this is a relatively common issue. Among popular web pages around one in a thousand were affected before my disclosure attempts. I recommend that pentesters and developers of security scan tools consider checking for this. It's simple: Just try to download the /core file and check if it looks like an executable. In most cases it will be an ELF file, however sometimes it may be a Mach-O (OS X) or an a.out file (very old Linux and Unix systems).

Image credit: NASA/JPL-Université Paris Diderot
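The check for a downloaded /core file described above can be sketched in Python by looking at the first bytes of the response. This is a minimal example with made-up function names. It recognizes the ELF and Mach-O magic numbers and, for ELF, additionally reads the `e_type` header field to see whether the file is marked as a core file. The older a.out variants are left out here. Fetching the bytes from the server is not shown.

```python
import struct

# Magic numbers at the start of the file. Mach-O appears in both byte orders.
MAGICS = {
    b"\x7fELF": "ELF",
    b"\xfe\xed\xfa\xce": "Mach-O",
    b"\xce\xfa\xed\xfe": "Mach-O",
    b"\xfe\xed\xfa\xcf": "Mach-O",
    b"\xcf\xfa\xed\xfe": "Mach-O",
}

ET_CORE = 4  # ELF e_type value for core files


def identify_binary(head):
    """Return 'ELF', 'Mach-O' or None based on the first four bytes."""
    return MAGICS.get(head[:4])


def is_elf_core(head):
    """True if the bytes start an ELF header whose type is ET_CORE."""
    if len(head) < 18 or head[:4] != b"\x7fELF":
        return False
    # Byte 5 (EI_DATA) gives the byte order: 1 = little endian, 2 = big endian.
    byte_order = "<" if head[5] == 1 else ">"
    (e_type,) = struct.unpack_from(byte_order + "H", head, 16)
    return e_type == ET_CORE


# A hand-built little-endian ELF header prefix with e_type = ET_CORE.
sample = b"\x7fELF" + bytes([2, 1, 1, 0]) + bytes(8) + struct.pack("<H", ET_CORE)
print(identify_binary(sample), is_elf_core(sample))  # ELF True
print(identify_binary(b"<html>"))                    # None
```

Checking the magic number first avoids flagging servers that answer every request with an HTML error page and a 200 status.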

Posted by Hanno Böck in English, Gentoo, Linux, Security at 11:20 | Comments (0) | Trackback (1)

Defined tags for this entry: core, coredump, crash, linux, php, segfault, vulnerability, webroot, websecurity, webserver

Tuesday, January 26. 2016

Safer use of C code - running Gentoo with Address Sanitizer

Update: When I wrote this blog post it was an open question for me whether using Address Sanitizer in production is a good idea. A recent analysis posted on the oss-security mailing list explains in detail why using Asan in its current form is almost certainly not a good idea. Having any suid binary built with Asan enables a local root exploit - and there are various other issues. Therefore using Gentoo with Address Sanitizer is only recommended for developing and debugging purposes.

Address Sanitizer is a remarkable feature that is part of the gcc and clang compilers. It can be used to find many typical C bugs - invalid memory reads and writes, use after free errors etc. - while running applications. It has found countless bugs in many software packages. I'm often surprised that many people in the free software community seem to be unaware of this powerful tool.

Address Sanitizer is mainly intended to be a debugging tool. It is usually used to test single applications, often in combination with fuzzing. But as Address Sanitizer can prevent many typical C security bugs - why not use it in production? It doesn't come for free. Address Sanitizer takes significantly more memory and slows down applications by 50 - 100 %. But for some security sensitive applications this may be a reasonable trade-off. The Tor project is already experimenting with this with its Hardened Tor Browser.

One project I've been working on in the past months is to allow a Gentoo system to be compiled with Address Sanitizer. Today I'm publishing this and want to allow others to test it. I have created a page in the Gentoo Wiki that should become the central documentation hub for this project. I published an overlay with several fixes and quirks on Github.

I see this work as part of my Fuzzing Project. (I'm posting it here because the Gentoo category of my personal blog gets indexed by Planet Gentoo.)

I am not sure if using Gentoo with Address Sanitizer is reasonable for a production system. One thing that makes me uneasy in suggesting this for high security requirements is that it's currently incompatible with Grsecurity. But just creating this project already caused me to find a number of bugs in several applications. Some notable examples include Coreutils/shred, Bash ([2], [3]), man-db, Pidgin-OTR, Courier, Syslog-NG, Screen, Claws-Mail ([2], [3]), ProFTPD ([2], [3]), ICU, TCL ([2]), Dovecot. I think it was worth the effort.

I will present this work in a talk at FOSDEM in Brussels this Saturday, 14:00, in the Security Devroom.

Posted by Hanno Böck in Code, English, Gentoo, Linux, Security at 01:40 | Comments (5) | Trackbacks (0)

Defined tags for this entry: addresssanitizer, asan, bufferoverflow, c, clang, gcc, gentoo, linux, memorysafety, security, useafterfree

Tuesday, June 23. 2015

The tricky security issue with FollowSymLinks and Apache

tl;dr Most servers running a multi-user webhosting setup with Apache HTTPD probably have a security problem. Unless you're using Grsecurity there is no easy fix.

I am part of a small webhosting business that I have been running as a side project for quite a while. We offer customers user accounts on our servers running Gentoo Linux and webspace with the typical Apache/PHP/MySQL combination. We recently became aware of a security problem regarding symlinks. I wanted to share this, because I was appalled by the fact that there was no obvious solution.

Apache has an option FollowSymLinks which basically does what it says. If a symlink in a webroot is accessed the webserver will follow it. In a multi-user setup this is a security problem. Here's why: If I know that another user on the same system is running a typical web application - let's say Wordpress - I can create a symlink to his config file (for Wordpress that's wp-config.php). I can't see this file with my own user account. But the webserver can see it, so I can access it with the browser over my own webpage. As I'm usually allowed to disable PHP I'm able to prevent the server from interpreting the file, so I can read the other user's database credentials. The webserver needs to be able to see all files, therefore this works. While PHP and CGI scripts usually run with user's rights (at least if the server is properly configured) the files are still read by the webserver. For this to work I need to guess the path and name of the file I want to read, but that's often trivial. In our case we have default paths in the form /home/[username]/websites/[hostname]/htdocs where webpages are located.

So the obvious solution one might think about is to disable the FollowSymLinks option and forbid users to set it themselves. However symlinks in web applications are pretty common and many will break if you do that. It's not feasible for a common webhosting server.

Apache supports another Option called SymLinksIfOwnerMatch. It's also pretty self-explanatory, it will only follow symlinks if they belong to the same user. That sounds like it solves our problem. However there are two catches: First of all the Apache documentation itself says that "this option should not be considered a security restriction". It is still vulnerable to race conditions.
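The idea behind an owner-match check can be sketched in a few lines of Python. This is not Apache's code, just an illustration of the principle with a made-up function name: compare the owner of the link itself with the owner of the file it points to.

```python
import os
import tempfile


def symlink_owner_matches(path):
    """True if a symlink and its target belong to the same user."""
    link_info = os.lstat(path)    # metadata of the link itself
    target_info = os.stat(path)   # metadata of the target (follows the link)
    return link_info.st_uid == target_info.st_uid


# Demonstration inside a temporary directory: both files are created by the
# current user, so the owners match.
with tempfile.TemporaryDirectory() as directory:
    target = os.path.join(directory, "config.php")
    link = os.path.join(directory, "link.php")
    open(target, "w").close()
    os.symlink(target, link)
    print(symlink_owner_matches(link))  # True
```

A check like this and the later opening of the file are two separate steps. If the link can be swapped in between, the check was done on something other than what gets served, which is presumably the kind of race condition the documentation warns about.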

But even leaving the race condition aside it doesn't really work. Web applications using symlinks will usually try to set FollowSymLinks in their .htaccess file. An example is Drupal which by default comes with such an .htaccess file. If you forbid users to set FollowSymLinks then the option won't be just ignored, the whole webpage won't run and will just return an error 500. What you could do is change the FollowSymLinks option in the .htaccess manually to SymLinksIfOwnerMatch. This may be feasible in some cases, but if you have a lot of users you don't want to explain to all of them that whenever they want to install some common web application they have to manually edit a file they don't understand. (There's a bug report for Drupal asking to change FollowSymLinks to SymLinksIfOwnerMatch, but it's been ignored for several years.)

So using SymLinksIfOwnerMatch is neither secure nor really feasible. The documentation for Cpanel discusses several possible solutions. The recommended solutions require proprietary modules. None of the proposed fixes work with a plain Apache setup, which I think is a pretty dismal situation. The most common web server has a severe security weakness in a very common situation and no usable solution for it.

The one solution that we chose is a feature of Grsecurity. Grsecurity is a Linux kernel patch that greatly enhances security and we've been very happy with it in the past. There are a lot of reasons to use this patch. I'm often impressed that local root exploits very often don't work on a Grsecurity system.

Grsecurity has an option like SymlinksIfOwnerMatch (CONFIG_GRKERNSEC_SYMLINKOWN) that operates on the kernel level. You can define a certain user group (which in our case is the "apache" group) for which this option will be enabled. For us this was the best solution, as it required very little change.

I haven't checked this, but I'm pretty sure that we were not alone with this problem. I'd guess that a lot of shared web hosting companies are vulnerable to this problem.

Here's the German blog post on our webpage and here's the original blogpost from an administrator at Uberspace (also German) which made us aware of this issue.

Tuesday, April 7. 2015

How Heartbleed could've been found

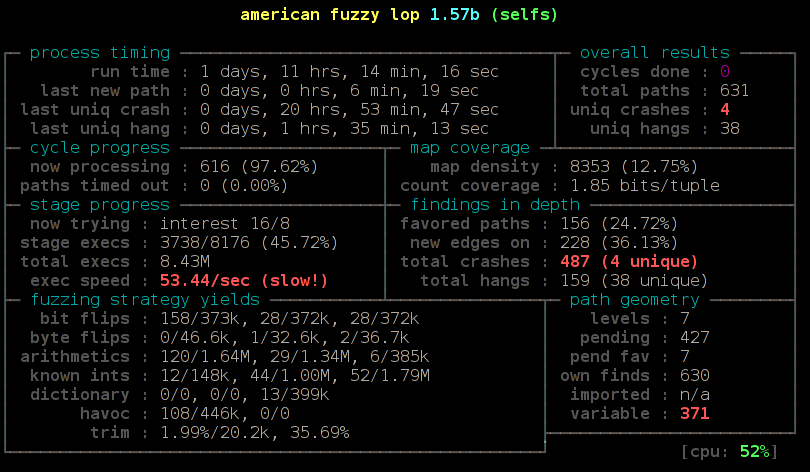

tl;dr With a reasonably simple fuzzing setup I was able to rediscover the Heartbleed bug. This uses state-of-the-art fuzzing and memory protection technology (american fuzzy lop and Address Sanitizer), but it doesn't require any prior knowledge about specifics of the Heartbleed bug or the TLS Heartbeat extension. We can learn from this to find similar bugs in the future.

Exactly one year ago a bug in the OpenSSL library became public that is one of the most well-known security bugs of all time: Heartbleed. It is a bug in the code of a TLS extension that up until then was known to hardly anybody. A read buffer overflow allowed an attacker to extract parts of the memory of every server using OpenSSL.

Can we find Heartbleed with fuzzing?

Heartbleed was introduced in OpenSSL 1.0.1, which was released in March 2012, two years earlier. Many people wondered how it could've been hidden there for so long. David A. Wheeler wrote an essay discussing how fuzzing and memory protection technologies could've detected Heartbleed. It covers many aspects in detail, but in the end he only offers speculation on whether or not fuzzing would have found Heartbleed. So I wanted to try it out.

Of course it is easy to find a bug if you know what you're looking for. As best as reasonably possible I tried not to use any specific information I had about Heartbleed. I created a setup that's reasonably simple and similar to what someone would try without knowing anything about the specifics of Heartbleed.

Heartbleed is a read buffer overflow. What that means is that an application is reading outside the boundaries of a buffer. For example, imagine an application has a space in memory that's 10 bytes long. If the software tries to read 20 bytes from that buffer, you have a read buffer overflow. It will read whatever is in the memory located after the 10 bytes. These bugs are fairly common and the basic concept of exploiting buffer overflows is pretty old. Just to give you an idea how old: Recently the Chaos Computer Club celebrated the 30th anniversary of a hack of the German BtX-System, an early online service. They used a buffer overflow that was in many aspects very similar to the Heartbleed bug. (It is actually disputed if this is really what happened, but it seems reasonably plausible to me.)
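Python itself is memory safe, so the following is only a simulation of the 10-byte example above, not real C behavior. A bytearray stands in for process memory: the first 10 bytes are the buffer and the bytes after it are unrelated data that happens to sit next to it. All names and the "secret" are invented for this illustration.

```python
# Simulated memory: a 10-byte buffer followed by unrelated neighbouring data.
MEMORY = bytearray(b"0123456789" + b"SECRET-KEY")
BUFFER_START = 0
BUFFER_LEN = 10


def unchecked_read(length):
    """Read `length` bytes from the buffer start, trusting the caller's length."""
    return bytes(MEMORY[BUFFER_START:BUFFER_START + length])


def checked_read(length):
    """Same read, but refuse lengths that exceed the buffer."""
    if length > BUFFER_LEN:
        raise ValueError("requested length exceeds buffer size")
    return bytes(MEMORY[BUFFER_START:BUFFER_START + length])


print(unchecked_read(20))  # b'0123456789SECRET-KEY'
```

The unchecked variant returns the neighbouring data without any error, which is why such bugs can stay unnoticed for a long time.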

Fuzzing is a widely used strategy to find security issues and bugs in software. The basic idea is simple: Give the software lots of inputs with small errors and see what happens. If the software crashes you likely found a bug.
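A toy version of this idea fits in a few lines of Python. The parser below is a hypothetical stand-in for real software: it expects a length byte followed by a payload and raises an exception, which plays the role of a crash, when the declared length does not fit. The fuzzer takes one valid sample, changes a single random byte per round and records every input that makes the target fail.

```python
import random


def parse_record(data):
    """Toy target: the first byte is a length, the rest is the payload."""
    length = data[0]
    payload = data[1:]
    if length > len(payload):
        # Stands in for a crash in real software.
        raise IndexError("declared length exceeds payload")
    return payload[:length]


def mutate(sample, rng):
    """Return a copy of the sample with one random byte replaced."""
    data = bytearray(sample)
    data[rng.randrange(len(data))] = rng.randrange(256)
    return bytes(data)


def fuzz(target, sample, rounds=1000, seed=0):
    """Run the target on mutated inputs and collect those that fail."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(rounds):
        candidate = mutate(sample, rng)
        try:
            target(candidate)
        except Exception as exc:
            crashes.append((candidate, exc))
    return crashes


crashes = fuzz(parse_record, b"\x03abc")
print(len(crashes) > 0)  # True
```

Every crashing input found this way has a corrupted length byte, which points directly at the code that handles it.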

When buffer overflows happen an application doesn't always crash. Often it will just read (or write if it is a write overflow) to the memory that happens to be there. Whether it crashes depends on a lot of circumstances. Most of the time read overflows won't crash your application. That's also the case with Heartbleed. There are a couple of technologies that improve the detection of memory access errors like buffer overflows. An old and well-known one is the debugging tool Valgrind. However Valgrind slows down applications a lot (around 20 times slower), so it is not really well suited for fuzzing, where you want to run an application millions of times on different inputs.

Address Sanitizer finds more bugs

A better tool for our purpose is Address Sanitizer. David A. Wheeler calls it “nothing short of amazing”, and I want to reiterate that. I think every C/C++ software developer should know it and use it for testing.

Address Sanitizer is part of the C compiler and has been included into the two most common compilers in the free software world, gcc and llvm. To use Address Sanitizer one has to recompile the software with the command line parameter -fsanitize=address . It slows down applications, but only by a relatively small amount. According to their own numbers an application using Address Sanitizer is around 1.8 times slower. This makes it feasible for fuzzing tasks.

For the fuzzing itself a tool that recently gained a lot of popularity is american fuzzy lop (afl). This was developed by Michal Zalewski from the Google security team, who is also known by his nickname lcamtuf. As far as I'm aware the approach of afl is unique. It adds instructions to an application during compilation that allow the fuzzer to detect new code paths while running the fuzzing tasks. If a new interesting code path is found then the sample that created this code path is used as the starting point for further fuzzing.
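The code path detection can be sketched in a few lines of C. This follows the scheme described in afl's technical documentation (a shared map indexed by the current location XORed with the shifted previous location), but it is a simplified model: the function names are mine, and the real afl additionally takes hit counts into account.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define MAP_SIZE 65536u

static uint8_t  coverage_map[MAP_SIZE]; /* edges hit in the current run */
static uint8_t  seen_map[MAP_SIZE];     /* edges hit in any earlier run */
static uint16_t prev_location;

/* Reset the per-run state before the target processes a new input. */
void start_run(void)
{
    memset(coverage_map, 0, sizeof coverage_map);
    prev_location = 0;
}

/* Called at every instrumented branch point. cur_location stands for
 * the random id the compiler wrapper assigns to that point. */
void record_location(uint16_t cur_location)
{
    assert((uint32_t)(cur_location ^ prev_location) < MAP_SIZE);
    coverage_map[cur_location ^ prev_location]++;
    prev_location = cur_location >> 1; /* shift so that A->B differs from B->A */
}

/* Returns 1 if the current run hit an edge no earlier run has hit,
 * and remembers all edges of this run. */
int run_found_new_path(void)
{
    int is_new = 0;
    for (size_t i = 0; i < MAP_SIZE; i++) {
        if (coverage_map[i] != 0 && !seen_map[i]) {
            seen_map[i] = 1;
            is_new = 1;
        }
    }
    return is_new;
}
```

An input for which run_found_new_path() returns 1 would be kept and used as a starting point for further mutations, everything else gets discarded.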

Currently afl only uses file inputs and cannot directly fuzz network input. OpenSSL has a command line tool that allows all kinds of file inputs, so you can use it for example to fuzz the certificate parser. But this approach does not allow us to directly fuzz the TLS connection, because that only happens on the network layer. By fuzzing various file inputs I recently found two issues in OpenSSL, but both had been found by Brian Carpenter before, who at the same time was also fuzzing OpenSSL.

Let OpenSSL talk to itself

So to fuzz the TLS network connection I had to create a workaround. I wrote a small application that creates two instances of OpenSSL that talk to each other. This application doesn't do any real networking, it is just passing buffers back and forth and thus doing a TLS handshake between a server and a client. Each message packet is written down to a file. It will result in six files, but the last two are just empty, because at that point the handshake is finished and no more data is transmitted. So we have four files that contain actual data from a TLS handshake. If you want to dig into this, a good description of a TLS handshake is provided by the developers of OCaml-TLS and MirageOS.

Then I added the possibility of replacing individual handshake messages with files I pass on the command line. By calling my test application selftls with a number and a filename a handshake message gets replaced by this file. So to test just the first part of the server handshake I'd call the test application, take the output file packet-1 and pass it back again to the application by running selftls 1 packet-1. Now we have all the pieces we need to use american fuzzy lop and fuzz the TLS handshake.
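The replacement step itself needs nothing more than reading a file into the message buffer. The following C sketch shows how that might look; the function name, signature and return values are assumptions for illustration and are not taken from selftls.c.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper, not taken from selftls.c.
 * If path is NULL the message in buf stays untouched and orig_len is
 * returned. Otherwise buf is overwritten with the file contents (at
 * most cap bytes) and the new length is returned. Returns -1 if the
 * file cannot be opened. */
long load_replacement(const char *path, unsigned char *buf, size_t cap,
                      long orig_len)
{
    assert(buf != NULL);
    if (path == NULL)
        return orig_len;        /* nothing to replace */
    FILE *f = fopen(path, "rb");
    if (f == NULL)
        return -1;
    size_t n = fread(buf, 1, cap, f);
    fclose(f);
    return (long)n;             /* may be shorter or longer than before */
}
```

The important part for fuzzing is that the length comes from the file, so a mutated packet that is shorter or longer than the original is passed on as it is.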

I compiled OpenSSL 1.0.1f, the last version that was vulnerable to Heartbleed, with american fuzzy lop. This can be done by calling ./config and then replacing gcc in the Makefile with afl-gcc. We also want to use Address Sanitizer; to do so we have to set the environment variable AFL_USE_ASAN to 1.

There are some issues when using Address Sanitizer with american fuzzy lop. Address Sanitizer needs a lot of virtual memory (many Terabytes). American fuzzy lop limits the amount of memory an application may use. It is not trivially possible to only limit the real amount of memory an application uses and not the virtual amount, therefore american fuzzy lop cannot handle this flawlessly. Different solutions for this problem have been proposed and are currently being developed. I usually go with the simplest solution: I just disable the memory limit of afl (parameter -m -1). This poses a small risk: A fuzzed input may lead an application to a state where it will use all available memory and thereby cause other applications on the same system to malfunction. Based on my experience this is very rare, so I usually just ignore that potential problem.

After having compiled OpenSSL 1.0.1f we have two files libssl.a and libcrypto.a. These are static versions of OpenSSL and we will use them for our test application. We now also use the afl-gcc to compile our test application:

AFL_USE_ASAN=1 afl-gcc selftls.c -o selftls libssl.a libcrypto.a -ldl

Now we run the application. It needs a dummy certificate. I have put one in the repo. To make things faster I'm using a 512 bit RSA key. This is completely insecure, but as we don't want any security here – we just want to find bugs – this is fine, because a smaller key makes things faster. However if you want to try fuzzing the latest OpenSSL development code you need to create a larger key, because it'll refuse to accept such small keys.

The application will give us six packet files, however the last two will be empty. We only want to fuzz the very first step of the handshake, so we're interested in the first packet. We will create an input directory for american fuzzy lop called in and place packet-1 in it. Then we can run our fuzzing job:

afl-fuzz -i in -o out -m -1 -t 5000 ./selftls 1 @@

We pass the input and output directory, disable the memory limit and increase the timeout value, because TLS handshakes are slower than common fuzzing tasks. On my test machine around 6 hours later afl found the first crash. Now we can manually pass our output to the test application and will get a stack trace by Address Sanitizer:

==2268==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x629000013748 at pc 0x7f228f5f0cfa bp 0x7fffe8dbd590 sp 0x7fffe8dbcd38

READ of size 32768 at 0x629000013748 thread T0

#0 0x7f228f5f0cf9 (/usr/lib/gcc/x86_64-pc-linux-gnu/4.9.2/libasan.so.1+0x2fcf9)

#1 0x43d075 in memcpy /usr/include/bits/string3.h:51

#2 0x43d075 in tls1_process_heartbeat /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/ssl/t1_lib.c:2586

#3 0x50e498 in ssl3_read_bytes /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/ssl/s3_pkt.c:1092

#4 0x51895c in ssl3_get_message /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/ssl/s3_both.c:457

#5 0x4ad90b in ssl3_get_client_hello /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/ssl/s3_srvr.c:941

#6 0x4c831a in ssl3_accept /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/ssl/s3_srvr.c:357

#7 0x412431 in main /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/selfs.c:85

#8 0x7f228f03ff9f in __libc_start_main (/lib64/libc.so.6+0x1ff9f)

#9 0x4252a1 (/data/openssl/openssl-handshake/openssl-1.0.1f-nobreakrng-afl-asan-fuzz/selfs+0x4252a1)

0x629000013748 is located 0 bytes to the right of 17736-byte region [0x62900000f200,0x629000013748)

allocated by thread T0 here:

#0 0x7f228f6186f7 in malloc (/usr/lib/gcc/x86_64-pc-linux-gnu/4.9.2/libasan.so.1+0x576f7)

#1 0x57f026 in CRYPTO_malloc /home/hanno/code/openssl-fuzz/tests/openssl-1.0.1f/crypto/mem.c:308

We can see here that the crash is a heap buffer overflow doing an invalid read access of around 32 Kilobytes in the function tls1_process_heartbeat(). It is the Heartbleed bug. We found it.

I want to mention a couple of things that I found out while trying this. I did some things that I thought were necessary, but later it turned out that they weren't. After the Heartbleed news broke a number of reports stated that Heartbleed was partly the fault of OpenSSL's memory management. A mail by Theo de Raadt claiming that OpenSSL has “exploit mitigation countermeasures” was widely quoted. I was aware of that, so I first tried to compile OpenSSL without its own memory management. That can be done by calling ./config with the option no-buf-freelist.

But it turns out that although OpenSSL uses its own memory management, that doesn't defeat Address Sanitizer. I could replicate my fuzzing finding with OpenSSL compiled with its default options. Although it does its own allocation management, it will still do a call to the system's normal malloc() function for every new memory allocation. A blog post by Chris Rohlf digs into the details of the OpenSSL memory allocator.

Breaking random numbers for deterministic behaviour

When fuzzing the TLS handshake american fuzzy lop will report a red number counting variable runs of the application. The reason for that is that a TLS handshake uses random numbers to create the master secret that's later used to derive cryptographic keys. Also the RSA functions will use random numbers. I wrote a patch to OpenSSL to deliberately break the random number generator and let it only output ones (it didn't work with zeros, because OpenSSL will wait for non-zero random numbers in the RSA function).

During my tests this had no noticeable impact on the time it took afl to find Heartbleed. Still I think it is a good idea to remove nondeterministic behavior when fuzzing cryptographic applications. Later in the handshake there are also timestamps used, this can be circumvented with libfaketime, but for the initial handshake processing that I fuzzed to find Heartbleed that doesn't matter.
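The idea of the broken random number generator can be sketched as follows. This is not my actual OpenSSL patch, just an illustration with a made-up function name, and it assumes that "ones" means bytes with the value 0x01.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for a random byte source, not the real patch.
 * Fills the buffer with the constant byte 0x01, so every run of the
 * application sees exactly the same "random" data. Never use anything
 * like this outside of testing. */
int constant_rand_bytes(unsigned char *buf, size_t num)
{
    assert(buf != NULL);
    memset(buf, 0x01, num);
    return 1; /* report success, in the style of OpenSSL's RAND_bytes() */
}
```

With such a source two runs on the same input take the same code paths, which is what the fuzzer needs in order to tell real new paths from noise.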

Conclusion

You may ask now what the point of all this is. Of course we already know where Heartbleed is, it has been patched, fixes have been deployed and it is mostly history. It's been analyzed thoroughly.

The question has been asked if Heartbleed could've been found by fuzzing. I'm confident to say the answer is yes. One thing I should mention here however: American fuzzy lop was already available back then, but it was barely known. It only received major attention later in 2014, after Michal Zalewski used it to find two variants of the Shellshock bug. Earlier versions of afl were much less handy to use, e.g. they didn't have 64 bit support out of the box. I remember that I failed to use an earlier version of afl with Address Sanitizer, it was only possible after a couple of issues were fixed. A lot of other things have been improved in afl, so at the time Heartbleed was found american fuzzy lop probably wasn't in a state that would've allowed finding it in an easy, straightforward way.

I think the takeaway message is this: We have powerful tools freely available that are capable of finding bugs like Heartbleed. We should use them and look for the other Heartbleeds that are still lingering in our software. Take a look at the Fuzzing Project if you're interested in further fuzzing work. There are beginner tutorials that I wrote with the idea in mind to show people that fuzzing is an easy way to find bugs and improve software quality.

I already used my sample application to fuzz the latest OpenSSL code. Nothing was found yet, but of course this could be further tweaked by trying different protocol versions, extensions and other variations in the handshake.

I also wrote a German article about this finding for the IT news webpage Golem.de.

Update:

I want to point out some feedback I got that I think is noteworthy.

On Twitter it was mentioned that Codenomicon actually found Heartbleed via fuzzing. There's a Youtube video from Codenomicon's Antti Karjalainen explaining the details. However the way they did this was quite different: they built a protocol specific fuzzer. The remarkable feature of afl is that it is very powerful without knowing anything specific about the protocol used. Also it should be noted that Heartbleed was found twice, the first finder was Neel Mehta from Google.

Kostya Serebryany mailed me that he was able to replicate my findings with his own fuzzer which is part of LLVM, and it was even faster.

In the comments Michele Spagnuolo mentions that by compiling OpenSSL with -DOPENSSL_TLS_SECURITY_LEVEL=0 one can use very short and insecure RSA keys even in the latest version. Of course this shouldn't be done in production, but it is helpful for fuzzing and other testing efforts.

Posted by Hanno Böck in Code, Cryptography, English, Gentoo, Linux, Security at 15:23 | Comments (3) | Trackbacks (4)

Defined tags for this entry: addresssanitizer, afl, americanfuzzylop, bufferoverflow, fuzzing, heartbleed, openssl

Sunday, March 15. 2015

Talks at BSidesHN about PGP keyserver data and at Easterhegg about TLS

Just wanted to quickly announce two talks I'll give in the upcoming weeks: One at BSidesHN (Hannover, 20th March) about some findings related to PGP and keyservers and one at the Easterhegg (Braunschweig, 4th April) about the current state of TLS.

A look at the PGP ecosystem and its keys

PGP-based e-mail encryption is widely regarded as an important tool to provide confidential and secure communication. The PGP ecosystem consists of the OpenPGP standard, different implementations (mostly GnuPG and the original PGP) and keyservers.

The PGP keyservers operate on an add-only basis. That means keys can only be uploaded and never removed. We can use these keyservers as a tool to investigate potential problems in the cryptography of PGP-implementations. Similar projects regarding TLS and HTTPS have uncovered a large number of issues in the past.

The talk will present a tool to parse the data of PGP keyservers and put them into a database. It will then have a look at potential cryptographic problems. The tools used will be published under a free license after the talk.

Update:

Source code

A look at the PGP ecosystem through the key server data (background paper)

Slides

Some tales from TLS

The TLS protocol is one of the foundations of Internet security. In recent years it's been under attack: Various vulnerabilities, both in the protocol itself and in popular implementations, showed how fragile that foundation is.

On the other hand new features allow us to use TLS in a much more secure way these days than ever before. Features like Certificate Transparency and HTTP Public Key Pinning allow us to avoid many of the security pitfalls of the Certificate Authority system.

Update: Slides and video available. Bonus: Contains rant about DNSSEC/DANE.

Slides PDF, LaTeX, Slideshare

Video recording, also on Youtube

Posted by Hanno Böck in Cryptography, English, Gentoo, Life, Linux, Security at 13:16 | Comments (0) | Trackback (1)

Defined tags for this entry: braunschweig, bsideshn, ccc, cryptography, easterhegg, encryption, hannover, pgp, security, talk, tls

Friday, January 30. 2015

What the GHOST tells us about free software vulnerability management

GHOST itself is a heap overflow in the name resolution function of the Glibc. The Glibc is the standard C library on Linux systems, almost every piece of software that runs on a Linux system uses it. It is somewhat unclear right now how serious GHOST really is. A lot of software uses the affected function gethostbyname(), but a lot of conditions have to be met to make this vulnerability exploitable. Right now the most relevant attack is against the mail server exim where Qualys has developed a working exploit which they plan to release soon. There has been speculation whether GHOST might be exploitable through Wordpress, which would make it much more serious.

Technically GHOST is a heap overflow, which is a very common bug in C programming. C is inherently prone to these kinds of memory corruption errors and there are essentially two ways to move forward here: Improve the use of exploit mitigation techniques like ASLR and create new ones (levee is an interesting project, watch this 31C3 talk). And if possible move away from C altogether and develop core components in memory safe languages (I have high hopes for the Mozilla Servo project, watch this linux.conf.au talk).

GHOST was discovered three times

But the thing I want to elaborate here is something different about GHOST: It turns out that it has been discovered independently three times. It was already fixed in 2013 in the Glibc Code itself. The commit message didn't indicate that it was a security vulnerability. Then in early 2014 developers at Google found it again using Address Sanitizer (which – by the way – tells you that all software developers should use Address Sanitizer more often to test their software). Google fixed it in Chrome OS and explicitly called it an overflow and a vulnerability. And then recently Qualys found it again and made it public.

Now you may wonder why a vulnerability fixed in 2013 made headlines in 2015. The reason is that it wasn't widely fixed because it wasn't publicly known that it was serious. I don't think there was any malicious intent. The original Glibc fix was probably done without anyone noticing that it is serious and the Google devs may have thought that the fix is already public, so they don't need to make any noise about it. But we can clearly see that something doesn't work here. Which brings us to a discussion of how the Linux and free software world in general and vulnerability management in particular work.

The “Never touch a running system” principle

Quite early when I came in contact with computers I heard the phrase “Never touch a running system”. This may have been a reasonable approach to IT systems back then when computers usually weren't connected to any networks and when remote exploits weren't a thing, but it certainly isn't a good idea today in a world where almost every computer is part of the Internet. Because once new security vulnerabilities become public you should change your system and fix them. However that doesn't change the fact that many people still operate like that.

A number of Linux distributions provide “stable” or “Long Term Support” versions. Basically the idea is this: At some point they take the current state of their systems and further updates will only contain important fixes and security updates. They guarantee to fix security vulnerabilities for a certain time frame. This is kind of a compromise between the “Never touch a running system” approach and reasonable security. It tries to give you a system that will basically stay the same, but you get fixes for security issues. Popular examples for this approach are the stable branch of Debian, Ubuntu LTS versions and the Enterprise versions of Red Hat and SUSE.

To give you an idea about time frames, Debian currently supports the stable trees Squeeze (6.0), which was released in 2011, and Wheezy (7.0), which was released in 2013. Red Hat Enterprise Linux currently has 4 supported versions (4, 5, 6, 7), the oldest of which was originally released in 2005. So we're talking about pretty long time frames during which these systems get supported. Ubuntu and SUSE have similar long term supported systems.

These systems are delivered with an implicit promise: We will take care of security and if you update regularly you'll have a system that doesn't change much, but that will be secure against known threats. Now the interesting question is: How well do these systems deliver on that promise and how hard is that?

Vulnerability management is chaotic and fragile

I'm not sure how many people are aware how vulnerability management works in the free software world. It is a pretty fragile and chaotic process. There is no standard way things work. The information is scattered around many different places. Different people look for vulnerabilities for different reasons. Some are developers of the respective projects themselves, some are companies like Google that make use of free software projects, some are just curious people interested in IT security or researchers. They report a bug through the channels of the respective project. That may be a mailing list, a bug tracker or just a direct mail to the developer. Hopefully the developers fix the issue. It does happen that the person finding the vulnerability first has to explain to the developer why it actually is a vulnerability. Sometimes the fix will happen in a public code repository, sometimes not. Sometimes the developer will mention that it is a vulnerability in the commit message or the release notes of the new version, sometimes not. There are notorious projects that refuse to handle security vulnerabilities in a transparent way. Sometimes whoever found the vulnerability will post more information on his/her blog or on a mailing list like full disclosure or oss-security. Sometimes not. Sometimes vulnerabilities get a CVE id assigned, sometimes not.

Add to that the fact that in many cases it's far from clear what is a security vulnerability. It is absolutely common that if you ask the people involved whether this is serious the best and most honest answer they can give is “we don't know”. And very often bugs get fixed without anyone noticing that it even could be a security vulnerability.

Then there are projects where the number of security vulnerabilities found and fixed is really huge. The latest Chrome 40 release had 62 security fixes, version 39 had 42. Chrome releases a new version every two months. Browser vulnerabilities are found and fixed on a daily basis. Not that extreme but still high is the vulnerability count in PHP, which is especially worrying if you know that many webhosting providers run PHP versions not supported any more.

So you probably see my point: There is a very chaotic stream of information in various different places about bugs and vulnerabilities in free software projects. The number of vulnerabilities is huge. Making a promise that you will scan all this information for security vulnerabilities and backport the patches to your operating system is a big promise. And I doubt anyone can fulfill that.

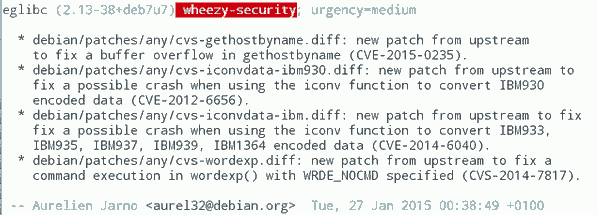

GHOST is a single example, so you might ask how often these things happen. At some point right after GHOST became public this excerpt from the Debian Glibc changelog caught my attention (excuse the bad quality, had to take the image from Twitter because I was unable to find that changelog on Debian's webpages):

What you can see here: While Debian fixed GHOST (which is CVE-2015-0235) they also fixed CVE-2012-6656 – a security issue from 2012. Admittedly this is a minor issue, but it's a vulnerability nevertheless. A quick look at the Debian changelog of Chromium both in squeeze and wheezy will tell you that they aren't fixing all the recent security issues in it. (Debian already had discussions about removing Chromium and in Wheezy they don't stick to a single version.)

It would be an interesting (and time consuming) project to take a package like PHP and check for all the security vulnerabilities whether they are fixed in the latest packages in Debian Squeeze/Wheezy, all Red Hat Enterprise versions and other long term support systems. PHP is probably more interesting than browsers, because the high profile targets for these vulnerabilities are servers. What worries me: I'm pretty sure some people already do that. They just won't tell you and me, instead they'll write their exploits and sell them to repressive governments or botnet operators.

Then there are also stories like this: Tavis Ormandy reported a security issue in Glibc in 2012 and the people from Google's Project Zero went to great lengths to show that it is actually exploitable. Reading the Glibc bug report you can learn that this was already reported in 2005(!), just nobody noticed back then that it was a security issue and it was minor enough that nobody cared to fix it.

There are also bugs that require changes so big that backporting them is essentially impossible. In the TLS world a lot of protocol bugs have been highlighted in recent years. Take Lucky Thirteen for example. It is a timing sidechannel in the way the TLS protocol combines the CBC encryption, padding and authentication. I like to mention this bug because I like to quote it as the TLS bug that was already mentioned in the specification (RFC 5246, page 23: "This leaves a small timing channel"). The real fix for Lucky Thirteen is not to use the erratic CBC mode any more and switch to authenticated encryption modes which are part of TLS 1.2. (There's another possible fix which is using Encrypt-then-MAC, but it is hardly deployed.) Up until recently most encryption libraries didn't support TLS 1.2. Debian Squeeze and Red Hat Enterprise 5 ship OpenSSL versions that only support TLS 1.0. There is no trivial patch that could be backported, because this is a huge change. What they likely backported are workarounds that avoid the timing channel. This will stop the attack, but it is not a very good fix, because it keeps the problematic old protocol and will force others to stay compatible with it.

LTS and stable distributions are there for a reason

The big question is of course what to do about it. OpenBSD developer Ted Unangst wrote a blog post yesterday titled Long term support considered harmful, I suggest you read it. He argues that we should get rid of long term support completely and urge users to upgrade more often. OpenBSD has a 6 month release cycle and supports two releases, so one version gets supported for one year.

Given what I wrote before you may think that I agree with him, but I don't. While I personally have always avoided using systems that are too old – I'm usually using Gentoo which doesn't have any snapshot releases at all and does rolling releases – I can see the value in long term support releases. There are a lot of systems out there – connected to the Internet – that are never updated. Taking away the option to install systems and let them run with relatively little maintenance overhead over several years will probably result in more systems never receiving any security updates. For all its imperfections, running a Debian Squeeze with the latest updates is certainly better than running an operating system from 2011 that stopped getting security fixes in 2012.

Improving the information flow

I don't think there is a silver bullet solution, but I think there are things we can do to improve the situation. What could be done is to coordinate and share the work. Debian, Red Hat and other distributions with stable/LTS versions could agree that their next versions are based on a specific Glibc version and they collaboratively work on providing patch sets to fix all the vulnerabilities in it. This already somehow happens with upstream projects providing long term support versions, the Linux kernel does that for example. Doing that at scale would require vast organizational changes in the Linux distributions. They would have to agree on a roughly common timescale to start their stable versions.

What I'd consider the most crucial thing is to improve and streamline the information flow about vulnerabilities. When Google fixes a vulnerability in Chrome OS they should make sure this information is shared with other Linux distributions and the public. And they should know where and how they should share this information.

One mechanism that tries to organize the vulnerability process is the system of CVE ids. The idea is actually simple: Publicly known vulnerabilities get a fixed id and they are in a public database. GHOST is CVE-2015-0235 (the scheme will soon change because four digits aren't enough for all the vulnerabilities we find every year). I got my first CVEs assigned in 2007, so I have some experiences with the CVE system and they are rather mixed. Sometimes I briefly mention rather minor issues in a mailing list thread and a CVE gets assigned right away. Sometimes I explicitly ask for CVE assignments and never get an answer.

I would like to see that we just assign CVEs for everything that even remotely looks like a security vulnerability. However right now I think the process is too unreliable to deliver that. There are other public vulnerability databases like OSVDB, I have limited experience with them, so I can't judge if they'd be better suited. Unfortunately sometimes people hesitate to request CVE ids because others abuse the CVE system to count assigned CVEs and use this as a metric for how secure a product is. Such bad statistics are outright dangerous, because they give people an incentive to downplay vulnerabilities or withhold information about them.

This post was partly inspired by some discussions on oss-security

Posted by Hanno Böck in Code, English, Gentoo, Linux, Security at 00:52 | Comments (5) | Trackbacks (0)

Saturday, December 20. 2014

Don't update NTP – stop using it

Update: This blogpost was written before NTS was available, and the information is outdated. If you are looking for a modern solution, I recommend using software and a time server with Network Time Security, as specified in RFC 8915.

tl;dr Several severe vulnerabilities have been found in the time setting software NTP. The Network Time Protocol is not secure anyway due to the lack of a secure authentication mechanism. Better use tlsdate.

Today several severe vulnerabilities in the NTP software were published. On Linux and other Unix systems running the NTP daemon is widespread, so this will likely cause some havoc. I wanted to take this opportunity to argue that I think that NTP has to die.

In the old times before we had the Internet our computers already had an internal clock. It was just up to us to make sure it shows the correct time. These days we have something much more convenient – and less secure. We can set our clocks through the Internet from time servers. This is usually done with NTP.

NTP is pretty old, it was developed in the 80s, Wikipedia says it's one of the oldest Internet protocols in use. The standard NTP protocol has no cryptography (that wasn't really common in the 80s). Anyone can tamper with your NTP requests and send you a wrong time. Is this a problem? It turns out it is. Modern TLS connections increasingly rely on the system time as a part of security concepts. This includes certificate expiration, OCSP revocation checks, HSTS and HPKP. All of these have security considerations that in one way or another expect the time of your system to be correct.

Practical attack against HSTS on Ubuntu

At the Black Hat Europe conference last October in Amsterdam there was a talk presenting a pretty neat attack against HSTS (the background paper is here, unfortunately there seems to be no video of the talk). HSTS is a protocol to prevent so-called SSL-Stripping-Attacks. What does that mean? In many cases a user goes to a web page without specifying the protocol, e. g. he might just type www.example.com in his browser or follow a link from another unencrypted page. To avoid attacks here a web page can signal the browser that it wants to be accessed exclusively through HTTPS for a defined amount of time. TLS security is just an example here, there are probably other security mechanisms that in some way rely on time.

Here's the catch: The defined amount of time depends on a correct time source. On some systems manipulating the time is as easy as running a man-in-the-middle attack on NTP. At the Black Hat talk a live attack against an Ubuntu system was presented. The speaker also published his NTP man-in-the-middle tool, called Delorean. Some systems don't allow arbitrary time jumps, so there the attack is not that easy. But the bottom line is: The system time can be important for application security, so it needs to be secure. NTP is not.
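The dependency on the clock can be sketched in a few lines of Python. This is a simplified model of how a browser might decide whether a stored HSTS entry still applies, not the code of any real browser; the function and parameter names are assumptions for illustration.

```python
def hsts_still_enforced(noted_at: float, max_age: int, now: float) -> bool:
    """Return True while a stored HSTS entry is considered valid.

    noted_at: Unix time at which the browser last saw the HSTS header
    max_age:  lifetime in seconds announced by the site
    now:      the current time as reported by the system clock
    """
    return now < noted_at + max_age


ONE_YEAR = 365 * 24 * 3600
noted_at = 1_400_000_000  # arbitrary example timestamp

# With a correct clock one day later, HTTPS is still enforced.
print(hsts_still_enforced(noted_at, ONE_YEAR, noted_at + 86400))         # True

# If an attacker pushes the clock two years ahead, the entry looks expired
# and a plain HTTP request becomes possible again.
print(hsts_still_enforced(noted_at, ONE_YEAR, noted_at + 2 * ONE_YEAR))  # False
```

The check itself is fine; the problem is that the value of now comes from a source the attacker controls.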

Now there is an authenticated version of NTP. It is rarely used, but there's another catch: It has been shown to be insecure and nobody has bothered to fix it yet. There is a pre-shared-key mode that is not completely insecure, but that is not really practical for widespread use. So authenticated NTP won't rescue us. The latest versions of Chrome show warnings in some situations when a highly implausible time is detected. That's a good move, but it's not a replacement for a secure system time.

There is another problem with NTP and that's the fact that it's using UDP. It can be abused for reflection attacks. UDP has no way of checking that the sender address of a network packet is the real sender. Therefore one can abuse UDP services to amplify denial-of-service attacks if there are commands whose reply is larger than the request. It was found that NTP has such a command called monlist that has a large amplification factor and it was widely enabled until recently. Amplification is also a big problem for DNS servers, but that's another topic.
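The amplification factor is simply the ratio of reply size to request size. A tiny Python sketch, with byte counts that are made up purely for illustration and are not measurements of monlist:

```python
def amplification_factor(request_bytes: int, response_bytes: int) -> float:
    """Ratio of traffic hitting the victim to traffic the attacker sends."""
    if request_bytes <= 0:
        raise ValueError("request size must be positive")
    return response_bytes / request_bytes


# Hypothetical sizes: a 50-byte spoofed request that triggers 5000 bytes of replies.
print(amplification_factor(50, 5000))  # 100.0
```

Because the sender address is spoofed, the replies go to the victim and not to the attacker.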

tlsdate can improve security

While there is no secure dedicated time setting protocol, there is an alternative: TLS. A TLS handshake contains a timestamp and that can be used to set your system time. This is kind of a hack. You're taking another protocol that happens to contain information about the time. But it works very well. There's a tool called tlsdate, together with a time setting daemon tlsdated, written by Jacob Appelbaum.
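To show where that timestamp lives, here is a minimal Python sketch that pulls it out of the raw bytes of a TLS 1.2 ServerHello. This is not how tlsdate itself is implemented; the function name is hypothetical, and the sketch assumes the ServerHello is the first handshake message in the record and does no certificate verification at all, which a real tool must do.

```python
import struct


def server_hello_time(record: bytes) -> int:
    """Return the gmt_unix_time field of a TLS ServerHello record.

    Layout: 5 bytes record header, 4 bytes handshake header,
    2 bytes server version, then the 32-byte random value whose
    first 4 bytes are the server's Unix time in seconds.
    """
    if len(record) < 15:
        raise ValueError("record too short for a ServerHello")
    if record[0] != 0x16:
        raise ValueError("not a TLS handshake record")
    if record[5] != 0x02:
        raise ValueError("not a ServerHello message")
    (gmt_unix_time,) = struct.unpack("!I", record[11:15])
    return gmt_unix_time
```

Note that the field only has a resolution of one second, and servers are free to fill it with random data.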

There are some potential problems to consider with tlsdate, but none of them is anywhere near as serious as the problems of NTP. Adam Langley mentions here that using TLS for time setting and verifying the TLS certificate with the current system time is a circularity. However this isn't a problem if the existing system time is at least remotely close to the real time. If using tlsdate gets widespread and people add random servers as their time source, strange things may happen. Just imagine server operator A thinks server B is a good time source and server operator B thinks server A is a good time source. Unlikely, but could be a problem. tlsdate uses the PTB (Physikalisch-Technische Bundesanstalt) as its default time source; that's an organization running atomic clocks in Germany. I hope they set their server time from the atomic clocks, then everything is fine. Another issue is that you're delegating your trust to a server operator. Depending on what your attack scenario is, that might be a problem. However, trusting one time source is a huge improvement compared to having a completely insecure time source.

So the conclusion is obvious: NTP is insecure, you shouldn't use it. You should use tlsdate instead. Operating systems should replace ntpd or other NTP-based solutions with tlsdated (ChromeOS already does).

(I should point out that the authentication problems have nothing to do with the current vulnerabilities. These are buffer overflows and this can happen in every piece of software. Tlsdate seems pretty secure, it uses seccomp to make exploitability harder. But of course tlsdate can have security vulnerabilities, too.)

Update: Accuracy and TLS 1.3

This blog entry got much more publicity than I expected, so I'd like to add a few comments on some feedback I got.

A number of people mentioned the lack of accuracy provided by tlsdate. The TLS timestamp is in seconds. Adding some network latency, you'll get a worst case inaccuracy of around one second, certainly less than two seconds. I can see that this is a problem for some special cases, however it's probably safe to say that for most average use cases an inaccuracy of less than two seconds does not matter. I'd prefer if we had a protocol that is both safe and as accurate as possible, but we don't. I think choosing the secure one is the better default choice.

Then some people pointed out that the timestamp of TLS will likely be removed in TLS 1.3. From a TLS perspective this makes sense. There are already TLS users that randomize the timestamp to avoid leaking the system time (e.g. Tor). One of the biggest problems in TLS is that it is too complex, so I think every change to remove unnecessary data is good.

For tlsdate this means very little in the short term. We're still struggling to get people to start using TLS 1.2. It will take a very long time until we can fully switch to TLS 1.3 (which will still take some time till it's ready). So for at least a couple of years tlsdate can be used with TLS 1.2.

I think both are valid points and they show that in the long term a better protocol would be desirable. Something like NTP, but with secure authentication. It should be possible to get both: Accuracy and security. With existing protocols and software we can only have one of these - and as said, I'd choose security by default.

I finally wanted to mention that the Linux Foundation is sponsoring some work to create a better NTP implementation and some code was just published. However it seems right now adding authentication to the NTP protocol is not part of their plans.

Update 2:

OpenBSD just came up with a pretty nice solution that combines the security of HTTPS and the accuracy of NTP by using an HTTPS connection to define boundaries for NTP timesetting.
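The idea can be sketched roughly like this in Python. This is not OpenBSD's actual algorithm, just an illustration of the principle: take a coarse but authenticated time from the Date header of an HTTPS response, and only accept an NTP answer if it falls within a margin around it. The function names and the margin of 30 seconds are assumptions made for this example.

```python
from email.utils import parsedate_to_datetime


def constraint_from_date_header(date_header: str, margin: float = 30.0):
    """Turn an HTTP Date header (received over HTTPS) into time bounds.

    Returns a (lower, upper) tuple of Unix timestamps. The margin is an
    arbitrary illustrative value covering latency and coarse resolution.
    """
    center = parsedate_to_datetime(date_header).timestamp()
    return (center - margin, center + margin)


def ntp_time_acceptable(ntp_time: float, bounds) -> bool:
    """Accept an NTP-reported Unix time only if it lies within the bounds."""
    lower, upper = bounds
    return lower <= ntp_time <= upper


bounds = constraint_from_date_header("Tue, 23 Dec 2014 12:00:00 GMT")
center = (bounds[0] + bounds[1]) / 2

print(ntp_time_acceptable(center + 0.25, bounds))            # True
print(ntp_time_acceptable(center + 365 * 86400.0, bounds))   # False
```

An attacker tampering with NTP can then only shift the clock within the margin, while the precise value still comes from NTP.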

Posted by Hanno Böck

in Computer culture, English, Gentoo, Linux, Security

at