Tuesday, February 25. 2025

Mixing up Public and Private Keys in OpenID Connect deployments

OpenID Connect is a single sign-on protocol that allows web pages to offer logins via other services. Whenever you see a web page that offers logins via, e.g., your Google or Facebook account, the technology behind it is usually OpenID Connect.

An OpenID Provider like Google can publish a configuration file in JSON format for services interacting with it at a defined URL location of this form: https://example.com/.well-known/openid-configuration (Google's can be found here.)

Those configuration files contain a field "jwks_uri" pointing to a JSON Web Key Set (JWKS) containing cryptographic public keys used to verify authentication tokens. JSON Web Keys are a way to encode cryptographic keys in JSON format, and a JSON Web Key Set is a JSON structure containing multiple such keys. (You can find Google's here.)

Given that the OpenID configuration file is at a known location and references the public keys, that gives us an easy way to scan for such keys. By scanning the Tranco Top 1 Million list and extending the scan with hostnames from SSO-Monitor (a research project providing extensive data about single sign-on services), I identified around 13.000 hosts with a valid OpenID Connect configuration and corresponding JSON Web Key Sets.

Confusing Public and Private Keys

JSON Web Keys have a very peculiar property. Cryptographic public and private keys are, in essence, just some large numbers. For most algorithms, all the numbers of the public key are also contained in the private key. For JSON Web Keys, those numbers are encoded with urlsafe Base64 encoding. (In case you don't know what urlsafe Base64 means, don't worry, it's not important here.)

Here is an example of an ECDSA public key in JSON Web Key format:

{

"kty": "EC",

"crv": "P-256",

"x": "MKBCTNIcKUSDii11ySs3526iDZ8AiTo7Tu6KPAqv7D4",

"y": "4Etl6SRW2YiLUrN5vfvVHuhp7x8PxltmWWlbbM4IFyM"

}Here is the corresponding private key:

{

"kty": "EC",

"crv": "P-256",

"x": "MKBCTNIcKUSDii11ySs3526iDZ8AiTo7Tu6KPAqv7D4",

"y": "4Etl6SRW2YiLUrN5vfvVHuhp7x8PxltmWWlbbM4IFyM",

"d": "870MB6gfuTJ4HtUnUvYMyJpr5eUZNP4Bk43bVdj3eAE"

}You may notice that they look very similar. The only difference is that the private key contains one additional value called d, which, in the case of ECDSA, is the private key value. In the case of RSA, the private key contains multiple additional values (one of them also called "d"), but the general idea is the same: the private key is just the public key plus some extra values.

What is very unusual and something I have not seen in any other context is that the serialization format for public and private keys is the same. The only way to distinguish public and private keys is to check if there are private values. JSON is commonly interpreted in an extensible way, meaning that any additional fields in a JSON field are usually ignored if they have no meaning to the application reading a JSON file.

These two facts combined lead to an interesting and dangerous property. Using a private key instead of a public key will usually work, because every private key in JSON Web Key format is also a valid public key.

You can guess by now where this is going. I checked whether any of the collected public keys from OpenID configurations were actually private keys.

This was the case for 9 hosts. Those included host names belonging to some prominent companies, including stackoverflowteams.com, stack.uberinternal.com, and ask.fisglobal.com. Those three all appear to use a service provided by Stackoverflow, and have since been fixed. (A report to Uber's bug bounty program at HackerOne was closed as a duplicate for a report they said they cannot show me. The report to FIS Global was closed by Bugcrowd's triagers as not applicable, with a generic response containing some explanations about OpenID Connect that appeared to be entirely unrelated to my report. After I asked for an explanation, I was asked to provide a proof of concept after the issue was already fixed. Stack Overflow has no bug bounty program, but fixed it after a report to their security contact.)

Short RSA Keys

7 hosts had RSA keys with a key length of 512 bit configured. Such keys have long been known to be breakable, and today, doing so is possible with relatively little effort. 45 hosts had RSA keys with a length of 1024 bit, which is considered to be breakable by powerful attackers, although such an attack has not yet been publicly demonstrated.

The first successful public attack on RSA with 512 bit was performed in 1999. Back then, it required months on a supercomputer. Today, breaking such keys is accessible to practically everyone. An implementation of the best-known attack on RSA is available as an Open Source software called CADO-NFS. Ryan Castellucci recently ran such an attack for a 512-bit RSA key they found in the control software of a solar and battery storage system. They mentioned a price of $70 for cloud services to perform the attack in a few hours. Cracking an RSA-512 bit key is, therefore, not a significant hurdle for any determined attacker.

Using Example Keys in Production

Running badkeys on the found keys uncovered another type of vulnerability. Before running the scan, I ensured that badkeys would detect example private keys in common Open Source OpenID Connect implementations. I discovered 18 affected hosts with keys that were such "Public Private Keys," i.e., keys where the corresponding private key is part of an existing, publicly available software package.

I have reported all 512-bit RSA keys and uses of example keys to the affected parties. Most of them remain unfixed.

Impact

Overall, I discovered 33 vulnerable hosts. With 13,000 detected OpenID configurations total, 0.25% of those were vulnerable in a way that would allow an attacker to access the private key.

How severe is such a private key break? It depends. OpenID Connect supports different ways in which authentication tokens are exchanged between an OpenID Provider and a Consumer. The token can be exchanged via the browser, and in this case, it is most severe, as it simply allows an attacker to sign arbitrary login tokens for any identity.

The token can also be exchanged directly between the OpenID Provider and the Consumer. In this case, an attack is much less likely, as it would require a man-in-the-middle attack and an additional attack on the TLS connection between the two servers. I have not made any attempts to figure out which methods the affected hosts were using.

How to do better

I would argue that two of these issues could have been entirely prevented by better specifications.

As mentioned, it is a curious and unusual fact that JSON Web Keys use the same serialization format for public and private keys. It is a design decision that makes confusing public and private keys likely.

In an ecosystem where public and private keys are entirely different — like TLS or SSH — any attempt to configure a private key instead of a public key would immediately be noticed, as it would simply not work.

One mitigation that can be implemented within the existing specification is for OpenID Connect implementations to check whether a JSON Web Key Set contains any private keys. For all currently supported values, this can easily be done by checking the presence of a value "d". (Not sure if this is a coincidence, but for both RSA and ECDSA, we tend to call the private key "d".)

The current OpenID Connect Discovery specification says: "The JWK Set MUST NOT contain private or symmetric key values." Therefore, checking it would merely mean that an implementation is enforcing compliance with the existing specification. I would suggest adding a requirement for such a check to future versions of the standard.

Similarly, I recommend such a check for any other protocol or software utilizing JSON Web Keys or JSON Web Key Sets in places where only public keys are expected.

It is probably too late to change the JSON Web Key standard itself for existing algorithms. However, in the future, such scenarios could easily be avoided by slightly different specifications. Currently, the algorithm of a JSON Web Key is configured in the "kty" field and can have values like "RSA" or "EC".

Let's take one of the future post-quantum signature algorithms as an example. If we specify ML-DSA-65 (I know, not a name easy to remember), instead of defining a "kty" value (or, as they seem to do it these days, the "alg" value) of "ML-DSA-65", we could assign two values: "ML-DSA-65-Private" and "ML-DSA-65-Public".

When it comes to short RSA keys, it is surprising that 512-bit keys are even possible in these protocols. OpenID Connect and everything it is based on is relatively new. The first draft version of the JSON Web Key standard dates back to 2012 — thirteen years after the first successful attack on RSA-512. Warnings that RSA-1024 was potentially insecure date back to the early 2000s, and at the time all of this was specified, there were widely accepted recommendations for a minimal key size of 2048 bit.

All of this is to say that those protocols should have never supported such short RSA keys. Ideally, they should have never supported RSA with arbitrary key sizes, and just defined a few standard key sizes. (The RSA specification itself comes from a time when it was common to allow a lot of flexibility in cryptographic algorithms. While pretty much everyone uses RSA keys in standard sizes that are multiples of 1024, it is possible to even use key sizes that are not aligned on bytes — like 2049 bit. The same could be said about the RSA exponent, which everyone sets to 65537 these days. Making these things configurable provides no advantage and adds needless complexity.)

Preventing the use of known example keys — I like to call them Public Private Keys — is more difficult to avoid. We could reduce these problems if people agreed to use a standardized set of test keys. RFC 9500 contains some test keys for this exact use case. My recommendation is that any key used as an example in an RFC document is a good test key. While that would not prevent people from using those test keys in production, it would make it easier to detect such cases.

You can use badkeys to check existing deployments for several known vulnerabilities and known-compromised keys. I added a parameter --jwk that allows directly scanning files with JSON Web Keys or JSON Web Key Sets in the latest version of badkeys.

Thanks to Daniel Fett for the idea to scan OpenID Connect keys and for feedback on this issue. Thanks to Sebastian Pipping for valuable feedback for this blogpost.

Image source: SVG Repo/CC0

Posted by Hanno Böck

in Cryptography, English, Security

at

12:08

| Comment (1)

| Trackbacks (0)

Defined tags for this entry: cryptography, itsecurity, openid, openidconnect, security, sso, websecurity

Friday, January 17. 2025

Private Keys in the Fortigate Leak

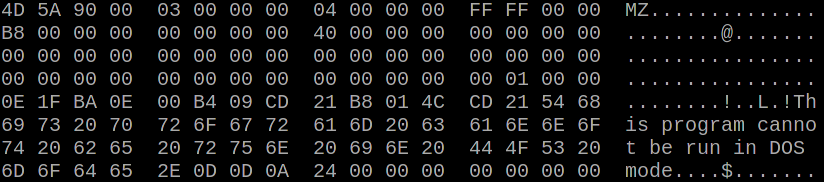

The leaked configurations contain keys looking like this (not an actual key from the leak):

set private-key "-----BEGIN ENCRYPTED PRIVATE KEY----- MIGjMF8GCSqGSIb3DQEFDTBSMDEGCSqGSIb3DQEFDDAkBBC5oRB/b9iViG5YoFmw 03T1AgIIADAMBggqhkiG9w0CCQUAMB0GCWCGSAFlAwQBKgQQPsMiXQoINAe2uBZX cbB1MARAXz1LwSJhElDvazEtfywe9pLSdCG9+A0G6CMk2Lp5eR954OjScEQY6Zqe 9J3V/fCQeDanrAE+/dpCefjAD4LhGg== -----END ENCRYPTED PRIVATE KEY-----"They also include corresponding certificates and keys in OpenSSH format. As you can see, these private keys are encrypted. However, above those keys, we can find the encryption password. The password is also encrypted, looking like this:

set password ENC SGFja1RoZVBsYW5ldCF[...]UA==A quick search for the encryption method turned up a script containing code to decrypt these passwords. The encryption key is static and publicly known.

The password line contains a Base64 string that decodes to 148 bytes. The first four bytes, padded with 12 zero bytes, are the initialization vector. The remaining bytes are the encrypted payload. The encryption uses AES-128 in CBC mode. The decrypted passwords appear to be mostly hex numbers and are padded with zero bytes - and sometimes other characters. (I am unaware of their meaning.)

In case I lost you here with technical details, the important takeaway is that in almost all cases, it is possible to decrypt the private key. (I may share a tool to extract the keys at a later point in time.)

The use of a static encryption key is a known vulnerability, tracked as CVE-2019-6693. According to Fortinet's advisory from 2020, this was "fixed" by introducing a setting that allows to configure a custom password.

"Fixing" default passwords by providing and documenting an option to change the password is something I have strong opinions about. It does not work (see also).

One result of this incident is that it gives us some real-world data on how many people will actually change their password if the option is given to them and documented in a security advisory. 99,5% of the keys could be decrypted with the default password. (Consider this as a lower bound and not an exact number. The password decryption may be flaky due to the unclear padding mechanism.)

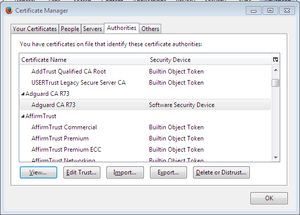

Overall, there were around 100,000 private keys in PKCS format and 60,000 in OpenSSH format. Most of the corresponding certificates were self-signed, but a few thousand were issued by publicly trusted web certificate authorities, most of them expired. However, the data included keys for 84 WebPKI certificates that were neither expired nor revoked as of this morning. Only 10 unexpired certificates were already revoked.

I have reported the still valid certificates to the responsible certificate authorities for revocation. CAs are obliged to revoke certificates with known-compromised keys within 24 hours. Therefore, these certificates will all be revoked soon. (I also filed two reports due to difficulties with the reporting process of some CAs.)

As mentioned above, it was already known in 2022 that this vulnerabiltiy was actively exploited. Yet, it appears that, overwhelmingly, this did not lead to people replacing their likely compromised private keys and revoking their certificates. While we cannot tell from this observation whether the same is true for passwords and other private keys not used in publicly trusted certificates, it appears likely.

Rant

It is an unfortunate reality that these days, security products often are themselves the source of security vulnerabilities. While I have no empirical evidence for this (and nobody else has the data, have I complained about this before?), I believe that we have entered a situation in recent years where security products turned from "mostly useless, sometimes harmful" to "almost certainly causing more security issues than they prevent". I have more thoughts on this that I may share at another time.

If you are wondering what to do, the solution is neither to patch and fix your Fortinet device nor to buy additional attack surface from one of its equally bad competitors. It is to stop believing that adding more attack surface will increase security.

Detecting affected keys

Detection for those keys has been added to badkeys. It is an open source tool, installable via Python's package management. You can use it to scan SSH host keys, certificates, or private keys. Make sure to update the badkeys blocklist (badkeys --update-bl) before doing so.

Please note that this detection is currently based on incomplete data and does not cover all keys in the leak. More updates with more complete coverage may come. Please also note that I have not made the private keys public. Unlike in other cases, this means badkeys cannot give you the private key. (I may decide to publish the keys at some point in the future.)

The badkeys blocklist uses a yet poorly documented format (it is on my todo list to improve this). I am sharing a list of SPKI SHA256 hashes of the affected keys to make it easier for others to write their own detection.

Update (2025-01-23): As mentioned, the original analysis was done with an incomplete set of the data. I later was able to access more complete data, increasing the number of keys to around 98,000 SSH keys and 167,000 PKCS keys. This also led to additional certificates reported for revocation. I have not kept track of the exact numbers. Therefore, the numbers above all refer to the incomplete dataset.

You can find a script to extract and decrypt passwords and private keys from Fortigate configuration files here.

Update (2025-01-24): During further analysis, I discovered that the dataset contained 314 private keys belonging to Let's Encrypt ACME accounts. I have now deactivated those accounts. Given that it has been over a week since the initial report of the incident, this adds to the impression that neither Fortinet itself nor their affected customers appear to have put any effort into cleaning up after this incident.

Update (2025-04-16): The keys.

Image source: paomedia / Public Domain

Posted by Hanno Böck

in Code, Cryptography, English, Security

at

17:04

| Comments (0)

| Trackbacks (0)

Monday, February 5. 2024

How to create a Secure, Random Password with JavaScript

Let's look at a few examples and learn how to create an actually secure password generation function. Our goal is to create a password from a defined set of characters with a fixed length. The password should be generated from a secure source of randomness, and it should have a uniform distribution, meaning every character should appear with the same likelihood. While the examples are JavaScript code, the principles can be used in any programming language.

One of the first examples to show up in Google is a blog post on the webpage dev.to. Here is the relevant part of the code:

/* Example with weak random number generator, DO NOT USE */

var chars = "0123456789abcdefghijklmnopqrstuvwxyz!@#$%^&*()ABCDEFGHIJKLMNOPQRSTUVWXYZ";

var passwordLength = 12;

var password = "";

for (var i = 0; i <= passwordLength; i++) {

var randomNumber = Math.floor(Math.random() * chars.length);

password += chars.substring(randomNumber, randomNumber +1);

}Math.random() does not provide cryptographically secure random numbers. Do not use them for anything related to security. Use the Web Crypto API instead, and more precisely, the window.crypto.getRandomValues() method.

This is pretty clear: We should not use Math.random() for security purposes, as it gives us no guarantees about the security of its output. This is not a merely theoretical concern: here is an example where someone used Math.random() to generate tokens and ended up seeing duplicate tokens in real-world use.

MDN tells us to use the getRandomValues() function of the Web Crypto API, which generates cryptographically strong random numbers.

We can make a more general statement here: Whenever we need randomness for security purposes, we should use a cryptographically secure random number generator. Even in non-security contexts, using secure random sources usually has no downsides. Theoretically, cryptographically strong random number generators can be slower, but unless you generate Gigabytes of random numbers, this is irrelevant. (I am not going to expand on how exactly cryptographically strong random number generators work, as this is something that should be done by the operating system. You can find a good introduction here.)

All modern operating systems have built-in functionality for this. Unfortunately, for historical reasons, in many programming languages, there are simple and more widely used random number generation functions that many people use, and APIs for secure random numbers often come with extra obstacles and may not always be available. However, in the case of Javascript, crypto.getRandomValues() has been available in all major browsers for over a decade.

After establishing that we should not use Math.random(), we may check whether searching specifically for that gives us a better answer. When we search for "Javascript random password without Math.Random()", the first result that shows up is titled "Never use Math.random() to create passwords in JavaScript". That sounds like a good start. Unfortunately, it makes another mistake. Here is the code it recommends:

/* Example with floating point rounding bias, DO NOT USE */

function generatePassword(length = 16)

{

let generatedPassword = "";

const validChars = "0123456789" +

"abcdefghijklmnopqrstuvwxyz" +

"ABCDEFGHIJKLMNOPQRSTUVWXYZ" +

",.-{}+!\"#$%/()=?";

for (let i = 0; i < length; i++) {

let randomNumber = crypto.getRandomValues(new Uint32Array(1))[0];

randomNumber = randomNumber / 0x100000000;

randomNumber = Math.floor(randomNumber * validChars.length);

generatedPassword += validChars[randomNumber];

}

return generatedPassword;

}The problem with this approach is that it uses floating-point numbers. It is generally a good idea to avoid floats in security and particularly cryptographic applications whenever possible. Floats introduce rounding errors, and due to the way they are stored, it is practically almost impossible to generate a uniform distribution. (See also this explanation in a StackExchange comment.)

Therefore, while this implementation is better than the first and probably "good enough" for random passwords, it is not ideal. It does not give us the best security we can have with a certain length and character choice of a password.

Another way of mapping a random integer number to an index for our list of characters is to use a random value modulo the size of our character class. Here is an example from a StackOverflow comment:

/* Example with modulo bias, DO NOT USE */

var generatePassword = (

length = 20,

characters = '0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz~!@-#$'

) =>

Array.from(crypto.getRandomValues(new Uint32Array(length)))

.map((x) => characters[x % characters.length])

.join('')The modulo bias in this example is quite small, so let's look at a different example. Assume we use letters and numbers (a-z, A-Z, 0-9, 62 characters total) and take a single byte (256 different values, 0-255) r from the random number generator. If we use the modulus r % 62, some characters are more likely to appear than others. The reason is that 256 is not a multiple of 62, so it is impossible to map our byte to this list of characters with a uniform distribution.

In our example, the lowercase "a" would be mapped to five values (0, 62, 124, 186, 248). The uppercase "A" would be mapped to only four values (26, 88, 150, 212). Some values have a higher probability than others.

(For more detailed explanations of a modulo bias, check this post by Yolan Romailler from Kudelski Security and this post from Sebastian Pipping.)

One way to avoid a modulo bias is to use rejection sampling. The idea is that you throw away the values that cause higher probabilities. In our example above, 248 and higher values cause the modulo bias, so if we generate such a value, we repeat the process. A piece of code to generate a single random character could look like this:

const pwchars = "abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789";

const limit = 256 - (256 % pwchars.length);

do {

randval = window.crypto.getRandomValues(new Uint8Array(1))[0];

} while (randval >= limit);An alternative to rejection sampling is to make the modulo bias so small that it does not matter (by using a very large random value).

However, I find rejection sampling to be a much cleaner solution. If you argue that the modulo bias is so small that it does not matter, you must carefully analyze whether this is true. For a password, a small modulo bias may be okay. For cryptographic applications, things can be different. Rejection sampling avoids the modulo bias completely. Therefore, it is always a safe choice.

There are two things you might wonder about this approach. One is that it introduces a timing difference. In cases where the random number generator turns up multiple numbers in a row that are thrown away, the code runs a bit longer.

Timing differences can be a problem in security code, but this one is not. It does not reveal any information about the password because it is only influenced by values we throw away. Even if an attacker were able to measure the exact timing of our password generation, it would not give him any useful information. (This argument is however only true for a cryptographically secure random number generator. It assumes that the ignored random values do not reveal any information about the random number generator's internal state.)

Another issue with this approach is that it is not guaranteed to finish in any given time. Theoretically, the random number generator could produce numbers above our limit so often that the function stalls. However, the probability of that happening quickly becomes so low that it is irrelevant. Such code is generally so fast that even multiple rounds of rejection would not cause a noticeable delay.

To summarize: If we want to write a secure, random password generation function, we should consider three things: We should use a secure random number generation function. We should avoid floating point numbers. And we should avoid a modulo bias.

Taking this all together, here is a Javascript function that generates a 15-character password, composed of ASCII letters and numbers:

function simplesecpw() {

const pwlen = 15;

const pwchars = "abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789";

const limit = 256 - (256 % pwchars.length);

let passwd = "";

let randval;

for (let i = 0; i < pwlen; i++) {

do {

randval = window.crypto.getRandomValues(new Uint8Array(1))[0];

} while (randval >= limit);

passwd += pwchars[randval % pwchars.length];

}

return passwd;

}If you want to use that code, feel free to do so. I have published it - and a slightly more configurable version that allows optionally setting the length and the set of characters - under a very permissive license (0BSD license).

An online demo generating a password with this code can be found at https://password.hboeck.de/. All code is available on GitHub.

Image Source: SVG Repo, CC0

Posted by Hanno Böck

in Code, Cryptography, English, Security, Webdesign

at

15:23

| Comments (0)

| Trackbacks (0)

Monday, April 13. 2020

Generating CRIME safe CSRF Tokens

For a small web project I recently had to consider how to generate secure tokens to prevent Cross Site Request Forgery (CSRF). I wanted to share how I think this should be done, primarily to get some feedback whether other people agree or see room for improvement.

For a small web project I recently had to consider how to generate secure tokens to prevent Cross Site Request Forgery (CSRF). I wanted to share how I think this should be done, primarily to get some feedback whether other people agree or see room for improvement.I am not going to discuss CSRF in general here, I will generally assume that you are aware of how this attack class works. The standard method to protect against CSRF is to add a token to every form that performs an action that is sufficiently random and unique for the session.

Some web applications use the same token for every request or at least the same token for every request of the same kind. However this is problematic due to some TLS attacks.

There are several attacks against TLS and HTTPS that work by generating a large number of requests and then slowly learning about a secret. A common target of such attacks are CSRF tokens. The list of these attacks is long: BEAST, all Padding Oracle attacks (Lucky Thirteen, POODLE, Zombie POODLE, GOLDENDOODLE), RC4 bias attacks and probably a few more that I have forgotten about. The good news is that none of these attacks should be a concern, because they all affect fragile cryptography that is no longer present in modern TLS stacks.

However there is a class of TLS attacks that is still a concern, because there is no good general fix, and these are compression based attacks. The first such attack that has been shown was called CRIME, which targeted TLS compression. TLS compression is no longer used, but a later attack called BREACH used HTTP compression, which is still widely in use and which nobody wants to disable, because HTML code compresses so well. Further improvements of these attacks are known as TIME and HEIST.

I am not going to discuss these attacks in detail, it is sufficient to know that they all rely on a secret being transmitted again and again over a connection. So CSRF tokens are vulnerable to this if they are the same over multiple connections. If we have an always changing CSRF token this attack does not apply to it.

An obvious fix for this is to always generate new CSRF tokens. However this requires quite a bit of state management on the server or other trade-offs, therefore I don’t think it’s desirable. Rather a good concept would be to keep a single server-side secret, but put some randomness in so the token changes on every request.

The BREACH authors have the following brief recommendation (thanks to Ivan Ristic for pointing this out): “Masking secrets (effectively randomizing by XORing with a random secret per request)”.

I read this as having a real token and a random value and the CSRF token would look like random_value + XOR(random_value, real_token). The server could verify this by splitting up the token, XORing the first half with the second and then comparing that to the real token.

However I would like to add something: I would prefer if a token used for one form and action cannot be used for another action. In case there is any form of token exfiltration it seems reasonable to limit the utility of the token as much as possible.

My idea is therefore to use a cryptographic hash function instead of XOR and add a scope string. This could be something like “adduser”, “addblogpost” etc., anything that identifies the action.

The server would keep a secret token per session on the server side and the CSRF token would look like this: random_value + hash(random_value + secret_token + scope). The random value changes each time the token is sent.

I have created some simple PHP code to implement this (if there is sufficient interest I will learn how to turn this into a composer package). The usage is very simple, there is one function to create a token that takes a scope string as the only parameter and another to check a token that takes the public token and the scope and returns true or false.

As for the implementation details I am using 256 bit random values and secret tokens, which is excessively too much and should avoid any discussion about them being too short. For the hash I am using sha364, which is widely supported and not vulnerable to length extension attacks. I do not see any reason why length extension attacks would be relevant here, but still this feels safer. I believe the order of the hash inputs should not matter, but I have seen constructions where having

The CSRF token is Base64-encoded, which should work fine in HTML forms.

My question would be if people think this is a sane design or if they see room for improvement. Also as this is all relatively straightforward and obvious, I am almost sure I am not the first person to invent this, pointers welcome.

Now there is an elephant in the room I also need to discuss. Form tokens are the traditional way to prevent CSRF attacks, but in recent years browsers have introduced a new and completely different way of preventing CSRF attacks called SameSite Cookies. The long term plan is to enable them by default, which would likely make CSRF almost impossible. (These plans have been delayed due to Covid-19 and there will probably be some unfortunate compatibility trade-offs that are reason enough to still set the flag manually in a SameSite-by-default world.)

Now there is an elephant in the room I also need to discuss. Form tokens are the traditional way to prevent CSRF attacks, but in recent years browsers have introduced a new and completely different way of preventing CSRF attacks called SameSite Cookies. The long term plan is to enable them by default, which would likely make CSRF almost impossible. (These plans have been delayed due to Covid-19 and there will probably be some unfortunate compatibility trade-offs that are reason enough to still set the flag manually in a SameSite-by-default world.)SameSite Cookies have two flavors: Lax and Strict. When set to Lax, which is what I would recommend that every web application should do, POST requests sent from another host are sent without the Cookie. With Strict all requests, including GET requests, sent from another host are sent without the Cookie. I do not think this is a desirable setting in most cases, as this breaks many workflows and GET requests should not perform any actions anyway.

Now here is a question I have: Are SameSite cookies enough? Do we even need to worry about CSRF tokens any more or can we just skip them? Are there any scenarios where one can bypass SameSite Cookies, but not CSRF tokens?

One could of course say “Why not both?” and see this as a kind of defense in depth. It is a popular mode of thinking to always see more security mechanisms as better, but I do not agree with that reasoning. Security mechanisms introduce complexity and if you can do with less complexity you usually should. CSRF tokens always felt like an ugly solution to me, and I feel SameSite Cookies are a much cleaner way to solve this problem.

So are there situations where SameSite Cookies do not protect and we need tokens? The obvious one is old browsers that do not support SameSite Cookies, however they have been around for a while and if you are willing to not support really old and obscure browsers that should not matter.

A remaining problem I could think of is software that accepts action requests both as GET and POST variables (e. g. in PHP if one uses the $_REQUESTS variable instead of $_POST). These need to be avoided, but using GET for anything that performs actions in the application should not be done anyway. (SameSite=Strict does not really fix his, as GET requests can still plausibly come from links, e. g. on applications that support internal messaging.)

Also an edge case problem may be a transition period: If a web application removes CSRF tokens and starts using SameSite Cookies at the same time Users may still have old Cookies around without the flag. So a transition period at least as long as the Cookie lifetime should be used.

Furthermore there are bypasses for the SameSite-by-default Cookies as planned by browser vendors, but these do not apply when the web application sets the SameSite flag itself. (Essentially the SameSite-by-default Cookies are only SameSite after two minutes, so there is a small window for an attack after setting the Cookie.)

Considering all this if one carefully makes sure that actions can only be performed by POST requests, sets SameSite=Lax on all Cookies and plans a transition period one should be able to safely remove CSRF tokens. Anyone disagrees?

Image sources: Piqsels, Wikimedia Commons

Posted by Hanno Böck

in Cryptography, English, Security, Webdesign

at

21:54

| Comments (8)

| Trackbacks (0)

Thursday, September 7. 2017

In Search of a Secure Time Source

Update: This blogpost was written before NTS was available, and the information is outdated. If you are looking for a modern solution, I recommend using software and a time server with Network Time Security, as specified in RFC 8915.

All our computers and smartphones have an internal clock and need to know the current time. As configuring the time manually is annoying it's common to set the time via Internet services. What tends to get forgotten is that a reasonably accurate clock is often a crucial part of security features like certificate lifetimes or features with expiration times like HSTS. Thus the timesetting should be secure - but usually it isn't.

All our computers and smartphones have an internal clock and need to know the current time. As configuring the time manually is annoying it's common to set the time via Internet services. What tends to get forgotten is that a reasonably accurate clock is often a crucial part of security features like certificate lifetimes or features with expiration times like HSTS. Thus the timesetting should be secure - but usually it isn't.

I'd like my systems to have a secure time. So I'm looking for a timesetting tool that fullfils two requirements:

Although these seem like trivial requirements to my knowledge such a tool doesn't exist. These are relatively loose requirements. One might want to add:

Some people need a very accurate time source, for example for certain scientific use cases. But that's outside of my scope. For the vast majority of use cases a clock that is off by a few seconds doesn't matter. While it's certainly a good idea to consider rogue servers given the current state of things I'd be happy to have a solution where I simply trust a server from Google or any other major Internet entity.

So let's look at what we have:

NTP

The common way of setting the clock is the NTP protocol. NTP itself has no transport security built in. It's a plaintext protocol open to manipulation and man in the middle attacks.

There are two variants of "secure" NTP. "Autokey", an authenticated variant of NTP, is broken. There's also a symmetric authentication, but that is impractical for widespread use, as it would require to negotiate a pre-shared key with the time server in advance.

NTPsec and Ntimed

In response to some vulnerabilities in the reference implementation of NTP two projects started developing "more secure" variants of NTP. Ntimed - a rewrite by Poul-Henning Kamp - and NTPsec, a fork of the original NTP software. Ntimed hasn't seen any development for several years, NTPsec seems active. NTPsec had some controversies with the developers of the original NTP reference implementation and its main developer is - to put it mildly - a controversial character.

But none of that matters. Both projects don't implement a "secure" NTP. The "sec" in NTPsec refers to the security of the code, not to the security of the protocol itself. It's still just an implementation of the old, insecure NTP.

Network Time Security

There's a draft for a new secure variant of NTP - called Network Time Security. It adds authentication to NTP.

However it's just a draft and it seems stalled. It hasn't been updated for over a year. In any case: It's not widely implemented and thus it's currently not usable. If that changes it may be an option.

tlsdate

tlsdate is a hack abusing the timestamp of the TLS protocol. The TLS timestamp of a server can be used to set the system time. This doesn't provide high accuracy, as the timestamp is only given in seconds, but it's good enough.

I've used and advocated tlsdate for a while, but it has some problems. The timestamp in the TLS handshake doesn't really have any meaning within the protocol, so several implementers decided to replace it with a random value. Unfortunately that is also true for the default server hardcoded into tlsdate.

Some Linux distributions still ship a package with a default server that will send random timestamps. The result is that your system time is set to a random value. I reported this to Ubuntu a while ago. It never got fixed, however the latest Ubuntu version Zesty Zapis (17.04) doesn't ship tlsdate any more.

Given that Google has shipped tlsdate for some in ChromeOS time it seems unlikely that Google will send randomized timestamps any time soon. Thus if you use tlsdate with www.google.com it should work for now. But it's no future-proof solution.

TLS 1.3 removes the TLS timestamp, so this whole concept isn't future-proof. Alternatively it supports using an HTTPS timestamp. The development of tlsdate has stalled, it hasn't seen any updates lately. It doesn't build with the latest version of OpenSSL (1.1) So it likely will become unusable soon.

OpenNTPD

OpenNTPD

The developers of OpenNTPD, the NTP daemon from OpenBSD, came up with a nice idea. NTP provides high accuracy, yet no security. Via HTTPS you can get a timestamp with low accuracy. So they combined the two: They use NTP to set the time, but they check whether the given time deviates significantly from an HTTPS host. So the HTTPS host provides safety boundaries for the NTP time.

This would be really nice, if there wasn't a catch: This feature depends on an API only provided by LibreSSL, the OpenBSD fork of OpenSSL. So it's not available on most common Linux systems. (Also why doesn't the OpenNTPD web page support HTTPS?)

Roughtime

Roughtime is a Google project. It fetches the time from multiple servers and uses some fancy cryptography to make sure that malicious servers get detected. If a roughtime server sends a bad time then the client gets a cryptographic proof of the malicious behavior, making it possible to blame and shame rogue servers. Roughtime doesn't provide the high accuracy that NTP provides.

From a security perspective it's the nicest of all solutions. However it fails the availability test. Google provides two reference implementations in C++ and in Go, but it's not packaged for any major Linux distribution. Google has an unfortunate tendency to use unusual dependencies and arcane build systems nobody else uses, so packaging it comes with some challenges.

One line bash script beats all existing solutions

As you can see none of the currently available solutions is really feasible and none fulfils the two mild requirements of authenticity and availability.

This is frustrating given that it's a really simple problem. In fact, it's so simple that you can solve it with a single line bash script:

This line sends an HTTPS request to Google, fetches the date header from the response and passes that to the date command line utility.

It provides authenticity via TLS. If the current system time is far off then this fails, as the TLS connection relies on the validity period of the current certificate. Google currently uses certificates with a validity of around three months. The accuracy is only in seconds, so it doesn't qualify for high accuracy requirements. There's no protection against a rogue Google server providing a wrong time.

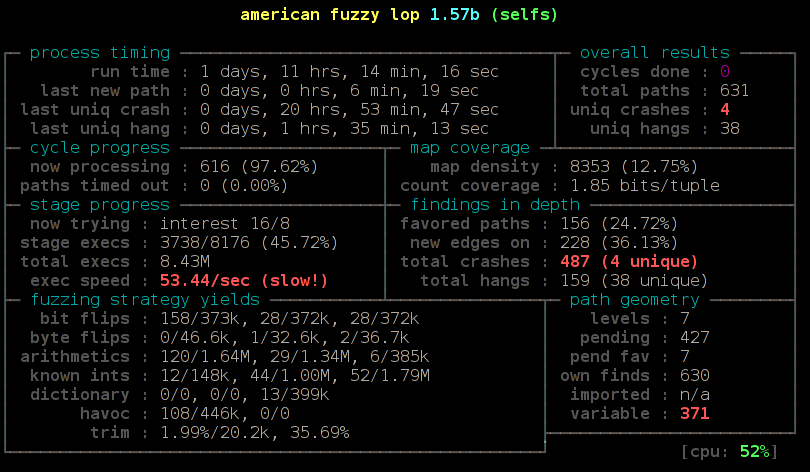

Another potential security concern may be that Google might attack the parser of the date setting tool by serving a malformed date string. However I ran american fuzzy lop against it and it looks robust.

While this certainly isn't as accurate as NTP or as secure as roughtime, it's better than everything else that's available. I put this together in a slightly more advanced bash script called httpstime.

All our computers and smartphones have an internal clock and need to know the current time. As configuring the time manually is annoying it's common to set the time via Internet services. What tends to get forgotten is that a reasonably accurate clock is often a crucial part of security features like certificate lifetimes or features with expiration times like HSTS. Thus the timesetting should be secure - but usually it isn't.

All our computers and smartphones have an internal clock and need to know the current time. As configuring the time manually is annoying it's common to set the time via Internet services. What tends to get forgotten is that a reasonably accurate clock is often a crucial part of security features like certificate lifetimes or features with expiration times like HSTS. Thus the timesetting should be secure - but usually it isn't.I'd like my systems to have a secure time. So I'm looking for a timesetting tool that fullfils two requirements:

- It provides authenticity of the time and is not vulnerable to man in the middle attacks.

- It is widely available on common Linux systems.

Although these seem like trivial requirements to my knowledge such a tool doesn't exist. These are relatively loose requirements. One might want to add:

- The timesetting needs to provide a good accuracy.

- The timesetting needs to be protected against malicious time servers.

Some people need a very accurate time source, for example for certain scientific use cases. But that's outside of my scope. For the vast majority of use cases a clock that is off by a few seconds doesn't matter. While it's certainly a good idea to consider rogue servers given the current state of things I'd be happy to have a solution where I simply trust a server from Google or any other major Internet entity.

So let's look at what we have:

NTP

The common way of setting the clock is the NTP protocol. NTP itself has no transport security built in. It's a plaintext protocol open to manipulation and man in the middle attacks.

There are two variants of "secure" NTP. "Autokey", an authenticated variant of NTP, is broken. There's also a symmetric authentication, but that is impractical for widespread use, as it would require to negotiate a pre-shared key with the time server in advance.

NTPsec and Ntimed

In response to some vulnerabilities in the reference implementation of NTP two projects started developing "more secure" variants of NTP. Ntimed - a rewrite by Poul-Henning Kamp - and NTPsec, a fork of the original NTP software. Ntimed hasn't seen any development for several years, NTPsec seems active. NTPsec had some controversies with the developers of the original NTP reference implementation and its main developer is - to put it mildly - a controversial character.

But none of that matters. Both projects don't implement a "secure" NTP. The "sec" in NTPsec refers to the security of the code, not to the security of the protocol itself. It's still just an implementation of the old, insecure NTP.

Network Time Security

There's a draft for a new secure variant of NTP - called Network Time Security. It adds authentication to NTP.

However it's just a draft and it seems stalled. It hasn't been updated for over a year. In any case: It's not widely implemented and thus it's currently not usable. If that changes it may be an option.

tlsdate

tlsdate is a hack abusing the timestamp of the TLS protocol. The TLS timestamp of a server can be used to set the system time. This doesn't provide high accuracy, as the timestamp is only given in seconds, but it's good enough.

I've used and advocated tlsdate for a while, but it has some problems. The timestamp in the TLS handshake doesn't really have any meaning within the protocol, so several implementers decided to replace it with a random value. Unfortunately that is also true for the default server hardcoded into tlsdate.

Some Linux distributions still ship a package with a default server that will send random timestamps. The result is that your system time is set to a random value. I reported this to Ubuntu a while ago. It never got fixed, however the latest Ubuntu version Zesty Zapis (17.04) doesn't ship tlsdate any more.

Given that Google has shipped tlsdate for some in ChromeOS time it seems unlikely that Google will send randomized timestamps any time soon. Thus if you use tlsdate with www.google.com it should work for now. But it's no future-proof solution.

TLS 1.3 removes the TLS timestamp, so this whole concept isn't future-proof. Alternatively it supports using an HTTPS timestamp. The development of tlsdate has stalled, it hasn't seen any updates lately. It doesn't build with the latest version of OpenSSL (1.1) So it likely will become unusable soon.

OpenNTPD

OpenNTPDThe developers of OpenNTPD, the NTP daemon from OpenBSD, came up with a nice idea. NTP provides high accuracy, yet no security. Via HTTPS you can get a timestamp with low accuracy. So they combined the two: They use NTP to set the time, but they check whether the given time deviates significantly from an HTTPS host. So the HTTPS host provides safety boundaries for the NTP time.

This would be really nice, if there wasn't a catch: This feature depends on an API only provided by LibreSSL, the OpenBSD fork of OpenSSL. So it's not available on most common Linux systems. (Also why doesn't the OpenNTPD web page support HTTPS?)

Roughtime

Roughtime is a Google project. It fetches the time from multiple servers and uses some fancy cryptography to make sure that malicious servers get detected. If a roughtime server sends a bad time then the client gets a cryptographic proof of the malicious behavior, making it possible to blame and shame rogue servers. Roughtime doesn't provide the high accuracy that NTP provides.

From a security perspective it's the nicest of all solutions. However it fails the availability test. Google provides two reference implementations in C++ and in Go, but it's not packaged for any major Linux distribution. Google has an unfortunate tendency to use unusual dependencies and arcane build systems nobody else uses, so packaging it comes with some challenges.

One line bash script beats all existing solutions

As you can see none of the currently available solutions is really feasible and none fulfils the two mild requirements of authenticity and availability.

This is frustrating given that it's a really simple problem. In fact, it's so simple that you can solve it with a single line bash script:

date -s "$(curl -sI https://www.google.com/|grep -i 'date:'|sed -e 's/^.ate: //g')"This line sends an HTTPS request to Google, fetches the date header from the response and passes that to the date command line utility.

It provides authenticity via TLS. If the current system time is far off then this fails, as the TLS connection relies on the validity period of the current certificate. Google currently uses certificates with a validity of around three months. The accuracy is only in seconds, so it doesn't qualify for high accuracy requirements. There's no protection against a rogue Google server providing a wrong time.

Another potential security concern may be that Google might attack the parser of the date setting tool by serving a malformed date string. However I ran american fuzzy lop against it and it looks robust.

While this certainly isn't as accurate as NTP or as secure as roughtime, it's better than everything else that's available. I put this together in a slightly more advanced bash script called httpstime.

Posted by Hanno Böck

in Code, Cryptography, English, Linux, Security

at

17:07

| Comments (6)

| Trackbacks (0)

Thursday, July 20. 2017

How I tricked Symantec with a Fake Private Key

Lately, some attention was drawn to a widespread problem with TLS certificates. Many people are accidentally publishing their private keys. Sometimes they are released as part of applications, in Github repositories or with common filenames on web servers.

Lately, some attention was drawn to a widespread problem with TLS certificates. Many people are accidentally publishing their private keys. Sometimes they are released as part of applications, in Github repositories or with common filenames on web servers.If a private key is compromised, a certificate authority is obliged to revoke it. The Baseline Requirements – a set of rules that browsers and certificate authorities agreed upon – regulate this and say that in such a case a certificate authority shall revoke the key within 24 hours (Section 4.9.1.1 in the current Baseline Requirements 1.4.8). These rules exist despite the fact that revocation has various problems and doesn’t work very well, but that’s another topic.

I reported various key compromises to certificate authorities recently and while not all of them reacted in time, they eventually revoked all certificates belonging to the private keys. I wondered however how thorough they actually check the key compromises. Obviously one would expect that they cryptographically verify that an exposed private key really is the private key belonging to a certificate.

I registered two test domains at a provider that would allow me to hide my identity and not show up in the whois information. I then ordered test certificates from Symantec (via their brand RapidSSL) and Comodo. These are the biggest certificate authorities and they both offer short term test certificates for free. I then tried to trick them into revoking those certificates with a fake private key.

Forging a private key

To understand this we need to get a bit into the details of RSA keys. In essence a cryptographic key is just a set of numbers. For RSA a public key consists of a modulus (usually named N) and a public exponent (usually called e). You don’t have to understand their mathematical meaning, just keep in mind: They’re nothing more than numbers.

An RSA private key is also just numbers, but more of them. If you have heard any introductory RSA descriptions you may know that a private key consists of a private exponent (called d), but in practice it’s a bit more. Private keys usually contain the full public key (N, e), the private exponent (d) and several other values that are redundant, but they are useful to speed up certain things. But just keep in mind that a public key consists of two numbers and a private key is a public key plus some additional numbers. A certificate ultimately is just a public key with some additional information (like the host name that says for which web page it’s valid) signed by a certificate authority.

A naive check whether a private key belongs to a certificate could be done by extracting the public key parts of both the certificate and the private key for comparison. However it is quite obvious that this isn’t secure. An attacker could construct a private key that contains the public key of an existing certificate and the private key parts of some other, bogus key. Obviously such a fake key couldn’t be used and would only produce errors, but it would survive such a naive check.

I created such fake keys for both domains and uploaded them to Pastebin. If you want to create such fake keys on your own here’s a script. To make my report less suspicious I searched Pastebin for real, compromised private keys belonging to certificates. This again shows how problematic the leakage of private keys is: I easily found seven private keys for Comodo certificates and three for Symantec certificates, plus several more for other certificate authorities, which I also reported. These additional keys allowed me to make my report to Symantec and Comodo less suspicious: I could hide my fake key report within other legitimate reports about a key compromise.

Symantec revoked a certificate based on a forged private key

Comodo didn’t fall for it. They answered me that there is something wrong with this key. Symantec however answered me that they revoked all certificates – including the one with the fake private key.

Comodo didn’t fall for it. They answered me that there is something wrong with this key. Symantec however answered me that they revoked all certificates – including the one with the fake private key.No harm was done here, because the certificate was only issued for my own test domain. But I could’ve also fake private keys of other peoples' certificates. Very likely Symantec would have revoked them as well, causing downtimes for those sites. I even could’ve easily created a fake key belonging to Symantec’s own certificate.

The communication by Symantec with the domain owner was far from ideal. I first got a mail that they were unable to process my order. Then I got another mail about a “cancellation request”. They didn’t explain what really happened and that the revocation happened due to a key uploaded on Pastebin.

I then informed Symantec about the invalid key (from my “real” identity), claiming that I just noted there’s something wrong with it. At that point they should’ve been aware that they revoked the certificate in error. Then I contacted the support with my “domain owner” identity and asked why the certificate was revoked. The answer: “I wanted to inform you that your FreeSSL certificate was cancelled as during a log check it was determined that the private key was compromised.”

To summarize: Symantec never told the domain owner that the certificate was revoked due to a key leaked on Pastebin. I assume in all the other cases they also didn’t inform their customers. Thus they may have experienced a certificate revocation, but don’t know why. So they can’t learn and can’t improve their processes to make sure this doesn’t happen again. Also, Symantec still insisted to the domain owner that the key was compromised even after I already had informed them that the key was faulty.

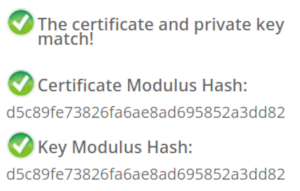

How to check if a private key belongs to a certificate?

In case you wonder how you properly check whether a private key belongs to a certificate you may of course resort to a Google search. And this was fascinating – and scary – to me: I searched Google for “check if private key matches certificate”. I got plenty of instructions. Almost all of them were wrong. The first result is a page from SSLShopper. They recommend to compare the MD5 hash of the modulus. That they use MD5 is not the problem here, the problem is that this is a naive check only comparing parts of the public key. They even provide a form to check this. (That they ask you to put your private key into a form is a different issue on its own, but at least they have a warning about this and recommend to check locally.)

In case you wonder how you properly check whether a private key belongs to a certificate you may of course resort to a Google search. And this was fascinating – and scary – to me: I searched Google for “check if private key matches certificate”. I got plenty of instructions. Almost all of them were wrong. The first result is a page from SSLShopper. They recommend to compare the MD5 hash of the modulus. That they use MD5 is not the problem here, the problem is that this is a naive check only comparing parts of the public key. They even provide a form to check this. (That they ask you to put your private key into a form is a different issue on its own, but at least they have a warning about this and recommend to check locally.)Furthermore we get the same wrong instructions from the University of Wisconsin, Comodo (good that their engineers were smart enough not to rely on their own documentation), tbs internet (“SSL expert since 1996”), ShellHacks, IBM and RapidSSL (aka Symantec). A post on Stackexchange is the only result that actually mentions a proper check for RSA keys. Two more Stackexchange posts are not related to RSA, I haven’t checked their solutions in detail.

Going to Google results page two among some unrelated links we find more wrong instructions and tools from Symantec (Update 2020: Link offline), SSL247 (“Symantec Specialist Partner Website Security” - they learned from the best) and some private blog. A documentation by Aspera (belonging to IBM) at least mentions that you can check the private key, but in an unrelated section of the document. Also we get more tools that ask you to upload your private key and then not properly check it from SSLChecker.com, the SSL Store (Symantec “Website Security Platinum Partner”), GlobeSSL (“in SSL we trust”) and - well - RapidSSL.

Documented Security Vulnerability in OpenSSL

So if people google for instructions they’ll almost inevitably end up with non-working instructions or tools. But what about other options? Let’s say we want to automate this and have a tool that verifies whether a certificate matches a private key using OpenSSL. We may end up finding that OpenSSL has a function

x509_check_private_key() that can be used to “check the consistency of a private key with the public key in an X509 certificate or certificate request”. Sounds like exactly what we need, right?Well, until you read the full docs and find out that it has a BUGS section: “The check_private_key functions don't check if k itself is indeed a private key or not. It merely compares the public materials (e.g. exponent and modulus of an RSA key) and/or key parameters (e.g. EC params of an EC key) of a key pair.”

I think this is a security vulnerability in OpenSSL (discussion with OpenSSL here). And that doesn’t change just because it’s a documented security vulnerability. Notably there are downstream consumers of this function that failed to copy that part of the documentation, see for example the corresponding PHP function (the limitation is however mentioned in a comment by a user).

So how do you really check whether a private key matches a certificate?

Ultimately there are two reliable ways to check whether a private key belongs to a certificate. One way is to check whether the various values of the private key are consistent and then check whether the public key matches. For example a private key contains values p and q that are the prime factors of the public modulus N. If you multiply them and compare them to N you can be sure that you have a legitimate private key. It’s one of the core properties of RSA that it’s secure based on the assumption that it’s not feasible to calculate p and q from N.

You can use OpenSSL to check the consistency of a private key:

openssl rsa -in [privatekey] -checkFor my forged keys it will tell you:

RSA key error: n does not equal p qYou can then compare the public key, for example by calculating the so-called SPKI SHA256 hash:

openssl pkey -in [privatekey] -pubout -outform der | sha256sumopenssl x509 -in [certificate] -pubkey |openssl pkey -pubin -pubout -outform der | sha256sumAnother way is to sign a message with the private key and then verify it with the public key. You could do it like this:

openssl x509 -in [certificate] -noout -pubkey > pubkey.pemdd if=/dev/urandom of=rnd bs=32 count=1openssl rsautl -sign -pkcs -inkey [privatekey] -in rnd -out sigopenssl rsautl -verify -pkcs -pubin -inkey pubkey.pem -in sig -out checkcmp rnd checkrm rnd check sig pubkey.pemIf cmp produces no output then the signature matches.

As this is all quite complex due to OpenSSLs arcane command line interface I have put this all together in a script. You can pass a certificate and a private key, both in ASCII/PEM format, and it will do both checks.

Summary

Symantec did a major blunder by revoking a certificate based on completely forged evidence. There’s hardly any excuse for this and it indicates that they operate a certificate authority without a proper understanding of the cryptographic background.

Apart from that the problem of checking whether a private key and certificate match seems to be largely documented wrong. Plenty of erroneous guides and tools may cause others to fall for the same trap.

Update: Symantec answered with a blog post.

Posted by Hanno Böck

in Cryptography, English, Linux, Security

at

16:58

| Comments (12)

| Trackback (1)

Defined tags for this entry: ca, certificate, certificateauthority, openssl, privatekey, rsa, ssl, symantec, tls, x509

Friday, May 19. 2017

The Problem with OCSP Stapling and Must Staple and why Certificate Revocation is still broken

Update (2020-09-16): While three years old, people still find this blog post when looking for information about Stapling problems. For Apache the situation has improved considerably in the meantime: mod_md, which is part of recent apache releases, comes with a new stapling implementation which you can enable with the setting MDStapling on.

Today the OCSP servers from Let’s Encrypt were offline for a while. This has caused far more trouble than it should have, because in theory we have all the technologies available to handle such an incident. However due to failures in how they are implemented they don’t really work.

We have to understand some background. Encrypted connections using the TLS protocol like HTTPS use certificates. These are essentially cryptographic public keys together with a signed statement from a certificate authority that they belong to a certain host name.

CRL and OCSP – two technologies that don’t work

Certificates can be revoked. That means that for some reason the certificate should no longer be used. A typical scenario is when a certificate owner learns that his servers have been hacked and his private keys stolen. In this case it’s good to avoid that the stolen keys and their corresponding certificates can still be used. Therefore a TLS client like a browser should check that a certificate provided by a server is not revoked.

That’s the theory at least. However the history of certificate revocation is a history of two technologies that don’t really work.

One method are certificate revocation lists (CRLs). It’s quite simple: A certificate authority provides a list of certificates that are revoked. This has an obvious limitation: These lists can grow. Given that a revocation check needs to happen during a connection it’s obvious that this is non-workable in any realistic scenario.

The second method is called OCSP (Online Certificate Status Protocol). Here a client can query a server about the status of a single certificate and will get a signed answer. This avoids the size problem of CRLs, but it still has a number of problems. Given that connections should be fast it’s quite a high cost for a client to make a connection to an OCSP server during each handshake. It’s also concerning for privacy, as it gives the operator of an OCSP server a lot of information.

However there’s a more severe problem: What happens if an OCSP server is not available? From a security point of view one could say that a certificate that can’t be OCSP-checked should be considered invalid. However OCSP servers are far too unreliable. So practically all clients implement OCSP in soft fail mode (or not at all). Soft fail means that if the OCSP server is not available the certificate is considered valid.

That makes the whole OCSP concept pointless: If an attacker tries to abuse a stolen, revoked certificate he can just block the connection to the OCSP server – and thus a client can’t learn that it’s revoked. Due to this inherent security failure Chrome decided to disable OCSP checking altogether. As a workaround they have something called CRLsets and Mozilla has something similar called OneCRL, which is essentially a big revocation list for important revocations managed by the browser vendor. However this is a weak workaround that doesn’t cover most certificates.

OCSP Stapling and Must Staple to the rescue?

There are two technologies that could fix this: OCSP Stapling and Must-Staple.

OCSP Stapling moves the querying of the OCSP server from the client to the server. The server gets OCSP replies and then sends them within the TLS handshake. This has several advantages: It avoids the latency and privacy implications of OCSP. It also allows surviving short downtimes of OCSP servers, because a TLS server can cache OCSP replies (they’re usually valid for several days).

However it still does not solve the security issue: If an attacker has a stolen, revoked certificate it can be used without Stapling. The browser won’t know about it and will query the OCSP server, this request can again be blocked by the attacker and the browser will accept the certificate.

Therefore an extension for certificates has been introduced that allows us to require Stapling. It’s usually called OCSP Must-Staple and is defined in RFC 7633 (although the RFC doesn’t mention the name Must-Staple, which can cause some confusion). If a browser sees a certificate with this extension that is used without OCSP Stapling it shouldn’t accept it.

So we should be fine. With OCSP Stapling we can avoid the latency and privacy issues of OCSP and we can avoid failing when OCSP servers have short downtimes. With OCSP Must-Staple we fix the security problems. No more soft fail. All good, right?

The OCSP Stapling implementations of Apache and Nginx are broken

Well, here come the implementations. While a lot of protocols use TLS, the most common use case is the web and HTTPS. According to Netcraft statistics by far the biggest share of active sites on the Internet run on Apache (about 46%), followed by Nginx (about 20 %). It’s reasonable to say that if these technologies should provide a solution for revocation they should be usable with the major products in that area. On the server side this is only OCSP Stapling, as OCSP Must Staple only needs to be checked by the client.

What would you expect from a working OCSP Stapling implementation? It should try to avoid a situation where it’s unable to send out a valid OCSP response. Thus roughly what it should do is to fetch a valid OCSP response as soon as possible and cache it until it gets a new one or it expires. It should furthermore try to fetch a new OCSP response long before the old one expires (ideally several days). And it should never throw away a valid response unless it has a newer one. Google developer Ryan Sleevi wrote a detailed description of what a proper OCSP Stapling implementation could look like.

Apache does none of this.

If Apache tries to renew the OCSP response and gets an error from the OCSP server – e. g. because it’s currently malfunctioning – it will throw away the existing, still valid OCSP response and replace it with the error. It will then send out stapled OCSP errors. Which makes zero sense. Firefox will show an error if it sees this. This has been reported in 2014 and is still unfixed.

Now there’s an option in Apache to avoid this behavior: SSLStaplingReturnResponderErrors. It’s defaulting to on. If you switch it off you won’t get sane behavior (that is – use the still valid, cached response), instead Apache will disable Stapling for the time it gets errors from the OCSP server. That’s better than sending out errors, but it obviously makes using Must Staple a no go.

It gets even crazier. I have set this option, but this morning I still got complaints that Firefox users were seeing errors. That’s because in this case the OCSP server wasn’t sending out errors, it was completely unavailable. For that situation Apache has a feature that will fake a tryLater error to send out to the client. If you’re wondering how that makes any sense: It doesn’t. The “tryLater” error of OCSP isn’t useful at all in TLS, because you can’t try later during a handshake which only lasts seconds.

This is controlled by another option: SSLStaplingFakeTryLater. However if we read the documentation it says “Only effective if SSLStaplingReturnResponderErrors is also enabled.” So if we disabled SSLStapingReturnResponderErrors this shouldn’t matter, right? Well: The documentation is wrong.

There are more problems: Apache doesn’t get the OCSP responses on startup, it only fetches them during the handshake. This causes extra latency on the first connection and increases the risk of hitting a situation where you don’t have a valid OCSP response. Also cached OCSP responses don’t survive server restarts, they’re kept in an in-memory cache.

There’s currently no way to configure Apache to handle OCSP stapling in a reasonable way. Here’s the configuration I use, which will at least make sure that it won’t send out errors and cache the responses a bit longer than it does by default:

I’m less familiar with Nginx, but from what I hear it isn’t much better either. According to this blogpost it doesn’t fetch OCSP responses on startup and will send out the first TLS connections without stapling even if it’s enabled. Here’s a blog post that recommends to work around this by connecting to all configured hosts after the server has started.

To summarize: This is all a big mess. Both Apache and Nginx have OCSP Stapling implementations that are essentially broken. As long as you’re using either of those then enabling Must-Staple is a reliable way to shoot yourself in the foot and get into trouble. Don’t enable it if you plan to use Apache or Nginx.

Certificate revocation is broken. It has been broken since the invention of SSL and it’s still broken. OCSP Stapling and OCSP Must-Staple could fix it in theory. But that would require working and stable implementations in the most widely used server products.

Today the OCSP servers from Let’s Encrypt were offline for a while. This has caused far more trouble than it should have, because in theory we have all the technologies available to handle such an incident. However due to failures in how they are implemented they don’t really work.

We have to understand some background. Encrypted connections using the TLS protocol like HTTPS use certificates. These are essentially cryptographic public keys together with a signed statement from a certificate authority that they belong to a certain host name.

CRL and OCSP – two technologies that don’t work

Certificates can be revoked. That means that for some reason the certificate should no longer be used. A typical scenario is when a certificate owner learns that his servers have been hacked and his private keys stolen. In this case it’s good to avoid that the stolen keys and their corresponding certificates can still be used. Therefore a TLS client like a browser should check that a certificate provided by a server is not revoked.

That’s the theory at least. However the history of certificate revocation is a history of two technologies that don’t really work.

One method are certificate revocation lists (CRLs). It’s quite simple: A certificate authority provides a list of certificates that are revoked. This has an obvious limitation: These lists can grow. Given that a revocation check needs to happen during a connection it’s obvious that this is non-workable in any realistic scenario.

The second method is called OCSP (Online Certificate Status Protocol). Here a client can query a server about the status of a single certificate and will get a signed answer. This avoids the size problem of CRLs, but it still has a number of problems. Given that connections should be fast it’s quite a high cost for a client to make a connection to an OCSP server during each handshake. It’s also concerning for privacy, as it gives the operator of an OCSP server a lot of information.

However there’s a more severe problem: What happens if an OCSP server is not available? From a security point of view one could say that a certificate that can’t be OCSP-checked should be considered invalid. However OCSP servers are far too unreliable. So practically all clients implement OCSP in soft fail mode (or not at all). Soft fail means that if the OCSP server is not available the certificate is considered valid.

That makes the whole OCSP concept pointless: If an attacker tries to abuse a stolen, revoked certificate he can just block the connection to the OCSP server – and thus a client can’t learn that it’s revoked. Due to this inherent security failure Chrome decided to disable OCSP checking altogether. As a workaround they have something called CRLsets and Mozilla has something similar called OneCRL, which is essentially a big revocation list for important revocations managed by the browser vendor. However this is a weak workaround that doesn’t cover most certificates.

OCSP Stapling and Must Staple to the rescue?

There are two technologies that could fix this: OCSP Stapling and Must-Staple.

OCSP Stapling moves the querying of the OCSP server from the client to the server. The server gets OCSP replies and then sends them within the TLS handshake. This has several advantages: It avoids the latency and privacy implications of OCSP. It also allows surviving short downtimes of OCSP servers, because a TLS server can cache OCSP replies (they’re usually valid for several days).

However it still does not solve the security issue: If an attacker has a stolen, revoked certificate it can be used without Stapling. The browser won’t know about it and will query the OCSP server, this request can again be blocked by the attacker and the browser will accept the certificate.

Therefore an extension for certificates has been introduced that allows us to require Stapling. It’s usually called OCSP Must-Staple and is defined in RFC 7633 (although the RFC doesn’t mention the name Must-Staple, which can cause some confusion). If a browser sees a certificate with this extension that is used without OCSP Stapling it shouldn’t accept it.

So we should be fine. With OCSP Stapling we can avoid the latency and privacy issues of OCSP and we can avoid failing when OCSP servers have short downtimes. With OCSP Must-Staple we fix the security problems. No more soft fail. All good, right?

The OCSP Stapling implementations of Apache and Nginx are broken

Well, here come the implementations. While a lot of protocols use TLS, the most common use case is the web and HTTPS. According to Netcraft statistics by far the biggest share of active sites on the Internet run on Apache (about 46%), followed by Nginx (about 20 %). It’s reasonable to say that if these technologies should provide a solution for revocation they should be usable with the major products in that area. On the server side this is only OCSP Stapling, as OCSP Must Staple only needs to be checked by the client.

What would you expect from a working OCSP Stapling implementation? It should try to avoid a situation where it’s unable to send out a valid OCSP response. Thus roughly what it should do is to fetch a valid OCSP response as soon as possible and cache it until it gets a new one or it expires. It should furthermore try to fetch a new OCSP response long before the old one expires (ideally several days). And it should never throw away a valid response unless it has a newer one. Google developer Ryan Sleevi wrote a detailed description of what a proper OCSP Stapling implementation could look like.

Apache does none of this.